
For centuries, philosophers have been using moral intuitions to reason about ethics. Today, some scientists think they’ve found a way to use psychology and neuroscience to undermine many of these intuitions and advance better moral arguments of their own. If these scientists are right, philosophers need to leave the armchair and head to the lab – or go into retirement.

The thing is, they’re wrong. There are certainly problems with the way philosophers use intuitions in ethics, but the real challenge to moral intuitions comes from philosophy, not from science.

How do ethicists use intuitions? The idea is that moral intuitions about particular cases are reliable sources of moral knowledge, or at least good evidence for or against moral claims. To assess whether a moral theory is true, philosophers formulate cases that call for particular moral choices and ask which choice seems, intuitively, like the right one. When the choice that seems right is the choice the theory calls for, this is a reason to accept the theory. If the choice that seems right is one the theory doesn’t endorse (or even condemns), that’s a reason to reject the theory. Moral philosophers do this all the time, for example in debates over the arguments for effective altruism and over the moral permissibility of having children.

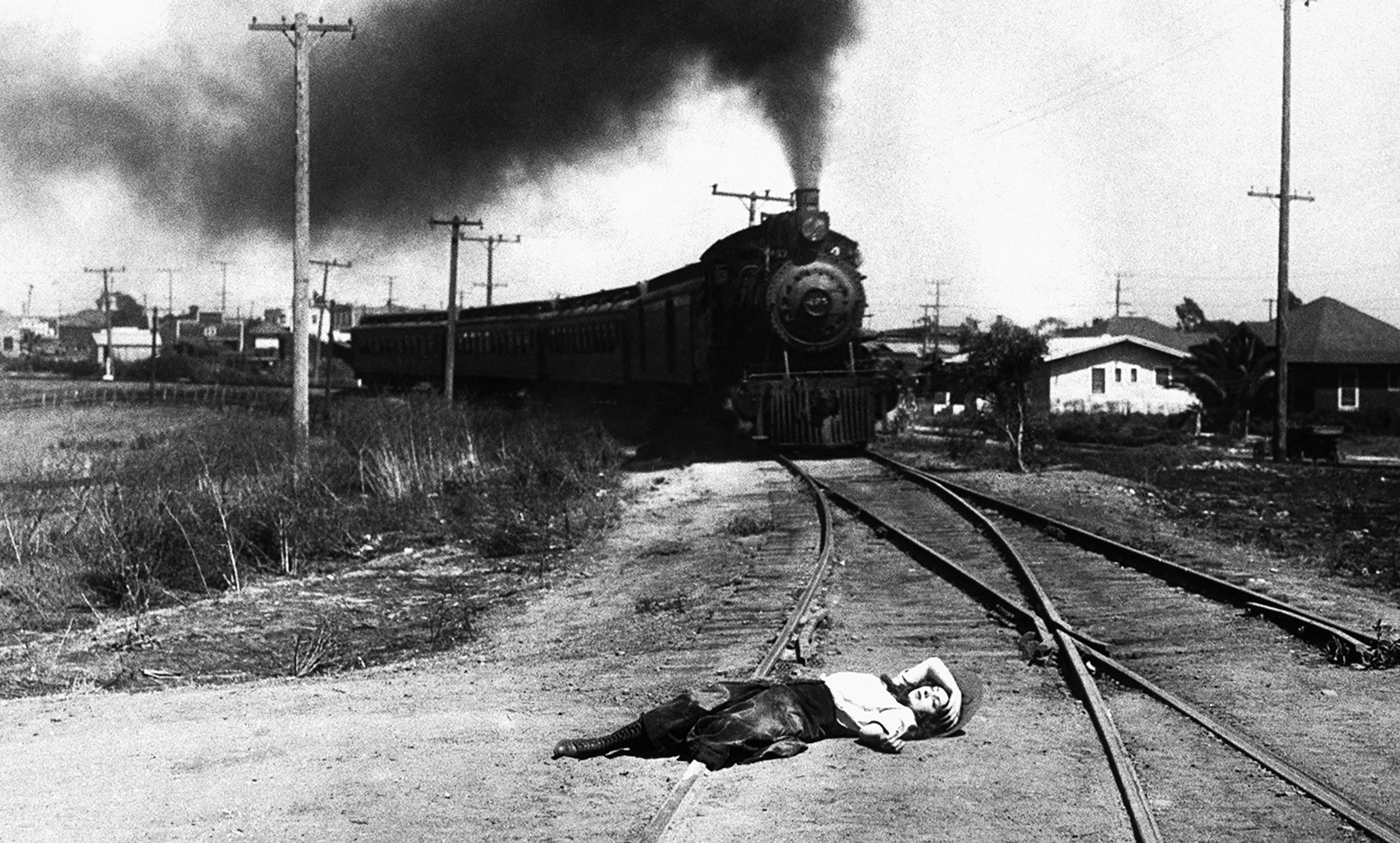

The ‘trolley problem’ is the best, most ubiquitous example of this kind of philosophy. Beginning with Philippa Foot and Judith Thomson, philosophers have invited readers to imagine that a trolley is speeding down a track. Unimpeded, the trolley will hit five people ahead of it, killing them, but an innocent person nearby could stop it. In one version, she could stop the trolley and save the five people by pulling a lever to divert it to another track, but this would kill one person who happens to be on that track. In another, she could stop the trolley from killing the five only by pushing someone off a bridge into the trolley’s path. Whatever the details, the moral question is what the person should do.

Ethicists will then cite people’s intuitions about the problem as evidence in the debate between the two most popular types of moral theories, consequentialist and deontological. Consequentialist moral theories hold that what’s right is a function of what’s good: the right thing to do is whatever would produce the best consequences. In contrast, deontological moral theories hold that the right has priority over the good: it could well be wrong to perform the action that has the ‘best’ consequences when that action breaks the moral rules. In trolley cases, consequentialists typically say that you should be willing to kill one to save five, but deontologists say that you shouldn’t.

In the past few years, scientists have argued that there is a fatal problem with this approach. Recent research, they say, suggests that many of our moral intuitions come from neural processes responsive to morally irrelevant factors – and hence are unlikely to track the moral truth.

The psychologist Joshua Greene at Harvard led studies that asked subjects hooked up to fMRI machines to decide whether a particular action in a hypothetical case was appropriate or not. He and his collaborators recorded their subjects’ responses to many cases. They found that, when responding to cases in which the agent harms someone personally (say, trolley cases in which the agent pushes an innocent bystander off a bridge to stop the trolley from killing five other people), subjects typically showed more brain activity in regions associated with emotions than when responding to cases in which the agent harms someone relatively impersonally (such as trolley cases in which the agent diverts the trolley to a track on which it will kill one innocent bystander, again to save the five). They also found that the minority of subjects who said the agent acted appropriately in doing harm in the personal cases took longer to give this verdict, and showed greater brain activity in regions associated with reasoning, than the majority who said otherwise.

According to Greene, this indicates that our moral intuitions in favour of deontological verdicts about cases – that you should not harm one to save five – are generated by more emotional brain processes responding to morally irrelevant factors, such as whether you cause the harm directly, up close and personal, or indirectly. And our moral intuitions in favour of consequentialist verdicts – that you should harm one to save five – are generated by more rational processes responsive to morally relevant factors, such as how much harm is done for how much good.

As a result, we should apparently be suspicious of deontological intuitions and deferential to our consequentialist intuitions. This research thereby also provides evidence for a particular moral theory: consequentialism. Unsurprisingly, consequentialists such as Peter Singer are enthusiastic about Greene’s approach. Singer himself argues that these results make sense in light of evolutionary psychology: we developed our prevailing range of emotional responses because it was conducive to evolutionary fitness, but there’s no reason to think that what’s adaptive aligns with what’s morally true. Other psychologists and neuroscientists see in Greene’s approach the beginnings of a scientific way to answer the questions philosophers have most closely guarded.

Greene’s results, however, don’t offer any scientific support for consequentialism. Nor do they say anything philosophically significant about moral intuitions. The philosopher Selim Berker at Harvard has offered a decisive argument showing why. Greene’s argument simply assumes that the factors that make a case personal – the factors that engage relatively emotional brain processes and typically lead to deontological intuitions – are morally irrelevant. It also assumes that the factors the brain responds to in the relatively impersonal cases – the factors that engage reasoning capacities and yield consequentialist intuitions – are morally relevant. But these assumptions are themselves moral intuitions of precisely the kind that the argument is supposed to challenge.

Deontologists could turn the tables by claiming that the factors their intuitions respond to are the morally relevant ones. This disagreement about which aspects of a case are morally relevant is precisely what’s at issue between consequentialists and deontologists. Most damning of all for the scientific attack on ethics, the neuroscience makes no contribution to the argument. The link between ‘personal’ cases and deontological intuitions, and ‘impersonal’ cases and consequentialist intuitions, does all the work.

Arguments from evolutionary psychology don’t support consequentialism either. Evolutionary pressures shape every region and process of the human brain, the deontological and the consequentialist alike, so if an evolutionary origin were enough to discredit one kind of intuition, it would discredit the other too. The scientific arguments against relying on moral intuitions in ethics turn out to be neither particularly scientific nor particularly good arguments.

Ironically, there are good arguments against the way philosophers use intuitions in ethics, but these come from philosophy. The first begins with the fact that different people often have different intuitions about the same case. What do we do then? Since disagreement is a possibility, why should we think intuitions track the truth? There are no easy answers to these questions. The problem is not merely that people disagree, but that their differing intuitions have the same authority. The most our intuitions can do, it seems, is tell us about ourselves and our own ways of thinking, not about the facts they’re supposedly ‘about’. The philosopher Stephen Stich at Rutgers calls this the ‘cognitive diversity’ objection.

Now, this might not be a problem for using moral intuitions if there were some independent way to tell when intuitions are correct. But there is no such way – and if there were, we wouldn’t need the intuitions in the first place. The philosopher Robert Cummins at the University of California, Davis calls this ‘the calibration objection’. If we had an answer key to moral cases and a good theory to prove that the key really did have the right answers, we could test particular intuitions – and maybe even the general reliability of whatever cognitive faculty we use to intuit – by seeing whether they line up with the key. But if we had a key and proof that it was correct, intuitions wouldn’t matter anymore. There would be no problem we’d need them to solve. In any case, we don’t have an independent answer key for any moral cases, let alone an explanation that would justify using moral intuitions to assess moral theories.

Perplexingly, the prevailing philosophical defence against these objections has been to deny that ethicists rely on moral intuitions at all. But as the philosopher Avner Baz at Tufts has shown, the cognitive diversity and calibration objections can be reformulated to challenge whatever it is these philosophers think they’re up to. The best hope for philosophers trying to defend this way of doing ethics would be to show, as T M Scanlon at Harvard has attempted, that our responses to cases are best understood as judgments, similar to those we make in mathematics and in ordinary life.

Need ethics stop until ethicists can make that argument? Hardly. Ethics can be done in many ways. Aristotle, for one, developed an ethical theory without appealing to intuitions. And at least since the ironic dialogues of Socrates, philosophers have practised ethics by meeting others where they are and trying to show them their own hypocrisies, doubts and uncertainties. This kind of ethics aims less at grasping foundational ethical truths than at what John Rawls called ‘proof from common ground’. And though this might seem limiting, I suspect the task it leaves us is greater than it at first appears.