I spent a lot of time in the dark in graduate school. Not just because I was learning the field of quantum optics – where we usually deal with one particle of light, or photon, at a time – but because my research used my own eyes as a measurement tool. I was studying how humans perceive the smallest amounts of light, and I was the first test subject every time.

I conducted these experiments in a closet-sized room on the eighth floor of the psychology department at the University of Illinois, working alongside my graduate advisor, Paul Kwiat, and psychologist Ranxiao Frances Wang. The space was equipped with special blackout curtains and a sealed door to achieve total darkness. For six years, I spent countless hours in that room, sitting in an uncomfortable chair with my head supported in a chin rest, focusing on dim, red crosshairs, and waiting for tiny flashes delivered by the most precise light source ever built for human vision research. My goal was to quantify how I (and other volunteer observers) perceived flashes of light from a few hundred photons down to just one photon.

As individual particles of light, photons belong to the world of quantum mechanics – a place that can seem totally unlike the Universe we know. Physics professors tell students with a straight face that an electron can be in two places at once (quantum superposition), or that a measurement on one photon can instantly affect another, far-away photon with no physical connection (quantum entanglement). Maybe we accept these incredible ideas so casually because we usually don’t have to integrate them into our daily existence. An electron can be in two places at once; a soccer ball cannot.

But photons are quantum particles that human beings can, in fact, directly perceive. Experiments with single photons could force the quantum world to become visible, and we don’t have to wait around – several tests are possible with today’s technology. The eye is a unique biological measurement device, and deploying it opens up exciting areas of research where we truly don’t know what we might find. Studying what we see when photons are in a superposition state could contribute to our understanding of the boundary between the quantum and classical worlds, while a human observer might even participate in a test of the strangest consequences of quantum entanglement.

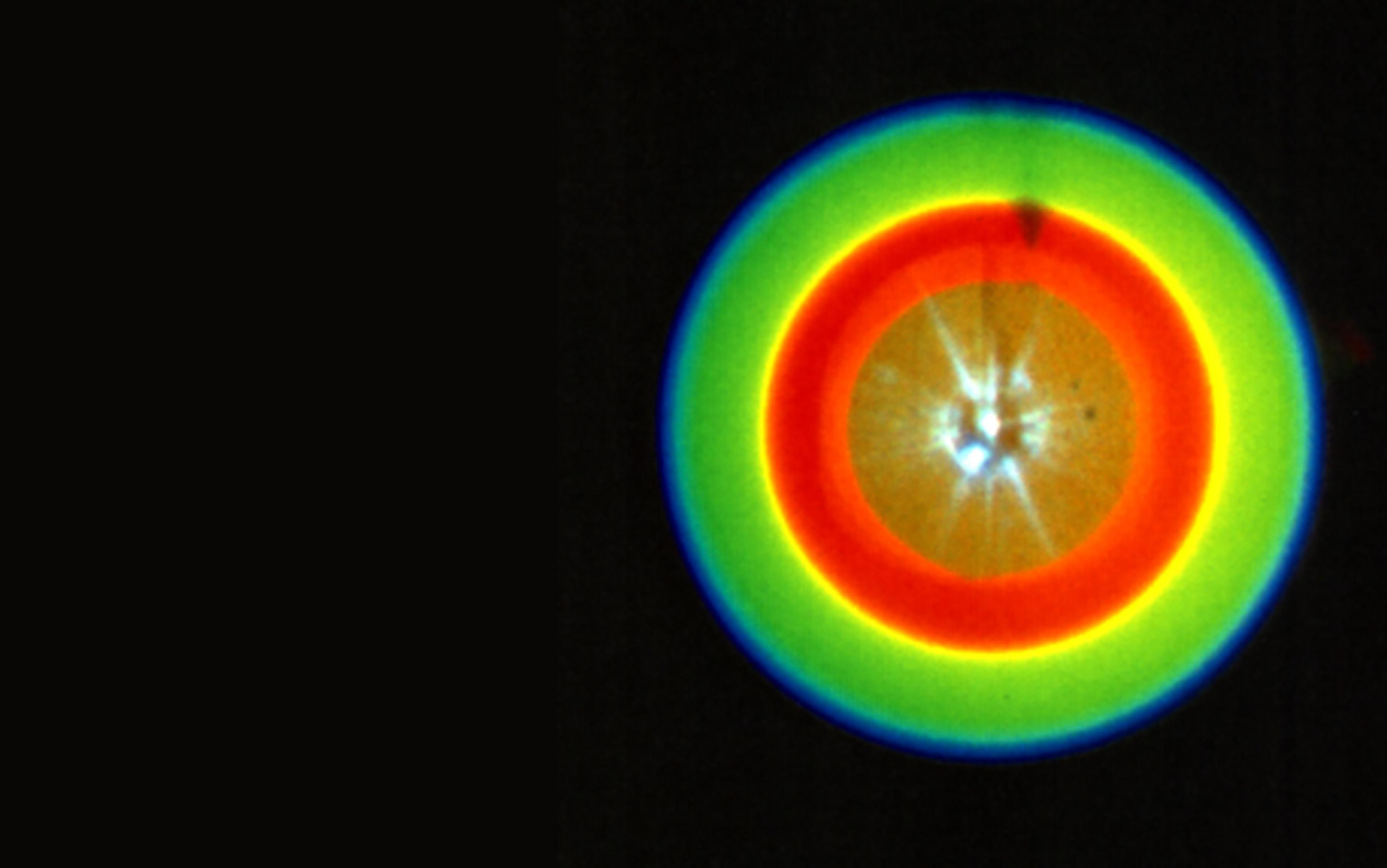

The human visual system works surprisingly well as a quantum detector. It’s a web of nerves and organs, from the eyeball to the brain, that turns light into the images we perceive. Humans and our vertebrate relatives have two primary types of living light detectors: rod cells and cone cells. These photoreceptor cells are located in the retina, the light-sensitive layer at the back of the eyeball. The cone cells provide colour vision, but they require bright light to work well. The rod cells see only in black-and-white, but they are tuned for night vision, and become most sensitive after about half an hour in darkness.

Rod cells are so sensitive that they can be activated by a single photon. One photon of visible light carries only a few electron-volts of energy. (Even a flying mosquito has around a trillion electron-volts of kinetic energy.) A cascading chain reaction and feedback loop inside the rod cell amplifies this tiny signal into a measurable electrical response, the language of neurons.
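To put those energies side by side, here is a back-of-the-envelope sketch in Python. It uses E = hc/λ for a photon and E = mv²/2 for the insect. The 500 nm wavelength (close to where rod cells are most sensitive) and the mosquito's mass of 2.5 mg and speed of 0.5 m/s are illustrative assumptions, not values from the experiments described here.

```python
# Sketch: energy of one visible photon vs. kinetic energy of a flying mosquito.
# The wavelength, mass and speed used below are illustrative assumptions.

PLANCK_H = 6.62607015e-34        # Planck constant, J*s (exact SI value)
SPEED_OF_LIGHT = 2.99792458e8    # speed of light, m/s (exact SI value)
ELECTRON_VOLT = 1.602176634e-19  # joules per electron-volt (exact SI value)


def photon_energy_ev(wavelength_m: float) -> float:
    """Energy of a single photon, E = h*c/lambda, in electron-volts."""
    return PLANCK_H * SPEED_OF_LIGHT / wavelength_m / ELECTRON_VOLT


def kinetic_energy_ev(mass_kg: float, speed_m_s: float) -> float:
    """Classical kinetic energy, E = m*v**2/2, in electron-volts."""
    return 0.5 * mass_kg * speed_m_s ** 2 / ELECTRON_VOLT


# Assumption: green-blue light near the rods' peak sensitivity.
photon = photon_energy_ev(500e-9)
# Assumption: a 2.5 mg mosquito drifting at 0.5 m/s.
mosquito = kinetic_energy_ev(2.5e-6, 0.5)

print(f"photon:   {photon:.2f} eV")
print(f"mosquito: {mosquito:.2e} eV")
print(f"ratio:    {mosquito / photon:.1e}")
```

With these inputs the photon comes out at about 2.5 eV and the mosquito at roughly 2 × 10¹² eV, so the rod cell is responding to an energy close to a trillion times smaller than that of a slow-moving insect.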

We know that rods are single-photon detectors because the electrical response of a rod cell to a single photon has been measured in the lab. What remained unknown until recently was whether these tiny signals make it through to the rest of the visual system and cause an observer to see anything, or whether they’re filtered out as noise or otherwise lost. This was a difficult question to answer because the right tools didn’t exist. Light from ordinary sources, from the Sun to neon lights, arrives as a random stream of photons, like raindrops falling from the sky. There’s no way to predict exactly when the next photon will come, or exactly how many photons will arrive in any time interval. No matter how dim the light, this randomness makes it impossible to be sure that a human observer is really seeing just one photon – she could be seeing two or three instead.
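The ‘raindrop’ problem can be made concrete with a short Python sketch. It assumes that the number of photons arriving in a fixed time window follows a Poisson distribution, which is exact for an ideal laser and a reasonable model of random arrivals in general (thermal light is, if anything, burstier). The mean photon numbers are arbitrary illustrative values.

```python
import math


def poisson_pmf(n: int, mean: float) -> float:
    """Probability of exactly n photons when the count is Poisson with this mean."""
    return math.exp(-mean) * mean ** n / math.factorial(n)


def multi_photon_fraction(mean: float) -> float:
    """Among windows containing at least one photon, the fraction containing two or more."""
    p0 = poisson_pmf(0, mean)
    p1 = poisson_pmf(1, mean)
    return (1.0 - p0 - p1) / (1.0 - p0)


# Dim the source step by step and watch what happens to the photon-number statistics.
for mean in (1.0, 0.1, 0.01):
    dist = ", ".join(f"P({n})={poisson_pmf(n, mean):.4f}" for n in range(4))
    print(f"mean={mean}: {dist}; P(2+ | 1+)={multi_photon_fraction(mean):.3f}")
```

Dimming the source makes multi-photon windows rarer relative to single-photon ones (for a small mean μ the fraction is roughly μ/2), but it never reaches zero, and most windows end up containing no photon at all. That is the gap a single-photon source closes.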


Over the past 75 years or so, researchers have come up with clever ways to try to get around the random photon problem. But in the late 1980s, the field of quantum optics produced a revolutionary tool: the single-photon source. This was a new kind of light that the world had never seen before, and it gave researchers the ability to produce exactly one photon at a time. Instead of a rainstorm, we now had an eyedropper.

Today there are many recipes for making single photons, including trapped atoms, quantum dots, and defects in diamond crystals. My favourite recipe, which I learned as a graduate student, is called spontaneous parametric downconversion. Step one: take a laser and shine it on a beta-barium borate crystal. Inside the crystal, laser photons sometimes spontaneously split apart into two daughter photons. The newborn pair of daughter photons comes out the other end of the crystal, making a Y shape. Step two: take one of these daughter photons and send it to a single-photon detector, which ‘clicks’ whenever it detects a photon. Because the daughters are always created in pairs, this click announces that exactly one photon is now present on the other side of the Y, ready to be used in an experiment.
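Heralding can be modelled in a few lines. The Python sketch below makes simplifying assumptions that are not part of the description above: the number of pairs created in a time window is Poisson-distributed with mean `mean_pairs`, the herald detector registers each photon on its side independently with efficiency `herald_eff` but cannot count how many arrived, and there are no dark counts. Under those assumptions, a click is almost always caused by exactly one pair when the pump laser is weak.

```python
import math


def herald_click_probability(mean_pairs: float, herald_eff: float) -> float:
    """Chance that the herald detector clicks in one time window.

    Assumes a Poisson number of pairs and a detector that registers each
    herald-side photon independently with probability herald_eff, so the
    number of detected photons is Poisson with mean mean_pairs * herald_eff.
    """
    return 1.0 - math.exp(-mean_pairs * herald_eff)


def multi_pair_given_click(mean_pairs: float, herald_eff: float) -> float:
    """Chance that two or more pairs were created, given that the herald clicked."""
    p_click = herald_click_probability(mean_pairs, herald_eff)
    # Exactly one pair was created AND its partner was detected.
    p_single_and_click = mean_pairs * math.exp(-mean_pairs) * herald_eff
    return 1.0 - p_single_and_click / p_click


HERALD_EFF = 0.5  # hypothetical detector efficiency
for mean_pairs in (0.1, 0.01, 0.001):
    print(f"mean pairs/window = {mean_pairs}: "
          f"P(click) = {herald_click_probability(mean_pairs, HERALD_EFF):.4f}, "
          f"P(2+ pairs | click) = {multi_pair_given_click(mean_pairs, HERALD_EFF):.4f}")
```

With `herald_eff = 0.5`, the chance that a click hides a second pair falls from about 7 per cent at a mean of 0.1 pairs per window to under 1 per cent at 0.01, which is why such sources are usually run with a weak pump.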

There’s one more important trick to studying single-photon vision. Just sending a single photon to an observer and asking: ‘Did you see it?’ is a flawed experimental design, because humans find this question difficult to answer objectively. We don’t like to say ‘yes’ unless we’re sure, but it’s hard to be sure about such a tiny signal. Noise in the visual system – which can produce phantom flashes even in total darkness – also adds to the confusion. A better strategy is to ask the observer to choose between two alternatives. In our experiments, we randomly choose whether to send a photon to the left or the right side of the observer’s eye, and in each trial they are asked: ‘Left or right?’ If the observer can answer that question better than random guessing (which would average 50 per cent accuracy), we know they are seeing something. This is called a forced-choice experimental design, and it’s common in psychology experiments.
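A forced-choice score is judged against the 50 per cent guessing baseline. One standard way to do that, sketched here in Python, is an exact one-sided binomial test: the probability that a pure guesser would score at least as well. The trial counts are hypothetical, and a real analysis may use other statistics.

```python
import math


def p_value_vs_guessing(correct: int, trials: int) -> float:
    """One-sided exact binomial test for a two-alternative forced choice.

    Returns the probability that an observer who is purely guessing
    (50 per cent chance per trial) gets at least `correct` answers right.
    """
    # Sum the upper tail with exact integers and divide once at the end,
    # so large trial counts do not overflow a float.
    tail = sum(math.comb(trials, k) for k in range(correct, trials + 1))
    return tail / 2 ** trials


for correct, trials in [(55, 100), (60, 100), (530, 1000)]:
    p = p_value_vs_guessing(correct, trials)
    print(f"{correct}/{trials} correct -> p = {p:.4f}")
```

For instance, 60 correct answers out of 100 would arise from guessing alone less than 3 per cent of the time, whereas 55 out of 100 would not be convincing on its own. A small advantage over chance therefore demands many trials.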

In 2016, a Vienna-based research group led by the physicist Alipasha Vaziri from the Rockefeller University in New York used a similar experiment to show that human observers could respond to a forced choice with single photons better than random guessing, providing the best evidence so far that humans really can see just one photon. With a single-photon source based on spontaneous parametric downconversion and a forced-choice experimental design, two experiments now become possible that could bring quantum weirdness into the realm of human perception: a test using superposition states, and what’s known as a ‘Bell test’ of non-locality using a human observer.

Superposition is a uniquely quantum concept. Quantum particles such as photons are described by the probability that a future measurement will find them in a particular location – so, before the measurement, the thinking is that they can be in two (or more!) places at once. This idea applies not just to particles’ locations, but to other properties, such as polarisation, which describes the direction in which a light wave’s electric field oscillates. Measurement seems to make particles ‘collapse’ to one outcome or another, but no one knows exactly how or why the collapse happens.
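In standard textbook notation (a general convention, not something specific to these experiments), writing |L⟩ and |R⟩ for a photon definitely on the left or definitely on the right, a superposition and the Born-rule probabilities of finding the photon on each side are:

$$
|\psi\rangle = \alpha\,|L\rangle + \beta\,|R\rangle, \qquad P(L) = |\alpha|^2, \qquad P(R) = |\beta|^2, \qquad |\alpha|^2 + |\beta|^2 = 1.
$$

An equal superposition has α = β = 1/√2, so each side is found half the time. What distinguishes it from a photon sent to one side by a coin toss is not these probabilities but the fixed relationship between the two complex amplitudes.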

The human visual system provides interesting new ways to investigate this problem. One simple but spooky test would be to determine whether humans perceive a difference between a photon in a superposition state and a photon in a definite location. Physicists have been interested in this question for years, and have proposed several approaches – but for the moment let’s consider the single-photon source described above, delivering a photon to either the left or the right of an observer’s eye.

First, we can deliver a photon in a superposition of the left and right positions – literally in two places at once – and ask an observer to report which side they believe the photon appeared on. To quantify any difference in perception between a superposition state and a photon that is simply sent to one side at random, the experiment will also include control trials in which the photon really is sent either to the left or to the right.

Creating the superposition state is the easy part. We can split the photon into an equal superposition of the left and right positions using a polarising beam splitter, an optical component that both transmits and reflects light depending on its polarisation. (Many surfaces do this – even ordinary window glass both transmits and reflects, which is why you can see both the outdoors and your own reflection. Beam splitters are engineered to do it reliably, with a predetermined chance of transmission and reflection.)
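How the polarising beam splitter sets the split can be sketched in Python, assuming an ideal component and a photon linearly polarised at an angle θ to the splitter’s transmission axis. The transmission probability is then cos²θ, so a photon prepared at 45° enters an equal superposition of the two output paths, which would be routed to the left and right of the eye. The angles, photon count and function names are illustrative.

```python
import math
import random


def transmission_probability(angle_deg: float) -> float:
    """Ideal polarising beam splitter: probability that a photon linearly
    polarised at `angle_deg` to the transmission axis is transmitted."""
    return math.cos(math.radians(angle_deg)) ** 2


def simulate_photons(angle_deg: float, n_photons: int, seed: int = 1) -> float:
    """Send n single photons through the splitter one at a time and return the
    fraction found in the transmitted port (the rest are in the reflected port)."""
    rng = random.Random(seed)
    p = transmission_probability(angle_deg)
    transmitted = sum(1 for _ in range(n_photons) if rng.random() < p)
    return transmitted / n_photons


for angle in (0, 30, 45, 90):
    print(f"{angle:>2} deg: P(transmit) = {transmission_probability(angle):.3f}, "
          f"simulated = {simulate_photons(angle, 10_000):.3f}")
```

Each simulated photon lands in one port or the other, so a detector that only records which side cannot, from these statistics alone, tell an equal superposition from a coin toss.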

Standard quantum mechanics predicts that a superposition of left and right shouldn’t look any different to an observer than a photon that’s randomly sent either to the left or to the right. Upon reaching the eye, the superposition of left and right will probably collapse to one side or the other so fast that it would be unnoticeable. But no one has tried such an experiment, so we don’t know for sure. Any statistically significant difference between the proportions of left and right responses in superposition trials and in control trials would be unexpected – and could mean something is missing from quantum mechanics as we know it. The observer could also be asked to record their subjective experience of viewing a superposition state, compared with the random mixture. Again, according to standard quantum mechanics, we don’t expect to see any difference – but if we did, it could point to new physics and a better understanding of the quantum measurement problem.
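Why standard quantum mechanics predicts no visible difference can be illustrated with density matrices, the textbook tool for comparing a superposition with a random mixture. In this plain-Python sketch (basis order left, right; function names hypothetical), both states give identical left/right probabilities and differ only in the off-diagonal ‘coherence’ terms, which matter for interference-type measurements rather than for a ‘left or right?’ question.

```python
import math


def pure_state(amplitudes):
    """Density matrix rho[i][j] = a_i * conj(a_j) for a normalised state."""
    return [[a * b.conjugate() for b in amplitudes] for a in amplitudes]


def mixture(states, weights):
    """Classical mixture: weighted sum of density matrices."""
    n = len(states[0])
    return [[sum(w * rho[i][j] for rho, w in zip(states, weights))
             for j in range(n)] for i in range(n)]


def prob_of(rho, amplitudes):
    """Born-rule probability <psi|rho|psi> of finding the system in state psi."""
    n = len(amplitudes)
    return sum(amplitudes[i].conjugate() * rho[i][j] * amplitudes[j]
               for i in range(n) for j in range(n)).real


s = 1 / math.sqrt(2)
left, right = [1 + 0j, 0j], [0j, 1 + 0j]
diagonal = [complex(s), complex(s)]  # (|L> + |R>)/sqrt(2)

superposed = pure_state(diagonal)
coin_toss = mixture([pure_state(left), pure_state(right)], [0.5, 0.5])

for name, rho in [("superposition", superposed), ("50/50 mixture", coin_toss)]:
    print(f"{name}: P(L)={prob_of(rho, left):.2f}, P(R)={prob_of(rho, right):.2f}, "
          f"coherence={abs(rho[0][1]):.2f}, P(diagonal)={prob_of(rho, diagonal):.2f}")
```

Both states answer ‘left or right?’ with 0.50 and 0.50; only a measurement sensitive to the coherence (here, projecting onto the diagonal state) separates them, giving 1.00 versus 0.50. An eye that behaves as an ordinary which-side detector should therefore see no difference, and that is the prediction the proposed experiment would put to the test.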


Human observers could also participate in a test of the other defining concept of quantum mechanics: entanglement. Entangled particles share a quantum state, and behave as if they are joined together, no matter how far they are separated.

Bell tests, named for the Northern Irish physicist John S Bell, are a category of experiments proving that quantum entanglement violates some of our natural ideas about reality. In a Bell test, measurements on pairs of entangled particles show results that can’t be explained by any theory that obeys the principle of local realism. Local realism is a pair of seemingly obvious assumptions. First is locality: things that are far apart can’t affect each other faster than a signal could travel between them (and the theory of relativity tells us that the speed limit is the speed of light). Second is realism: things in the physical world have definite properties at all times, even if they aren’t measured and don’t interact with anything else.

The concept of a Bell test is that two particles interact with each other and become entangled, then we separate them and make measurements on each one. We take at least two kinds of measurements – say, polarisation measurements in two different directions – and we decide which ones to make at random, so the two particles can’t ‘agree’ on the outcomes ahead of time. (This might sound like a bizarre conspiracy theory, but when it comes to the strange experimental consequences of entanglement, it’s important to rule out every alternative explanation.) The experiment is repeated many times with new pairs of particles to build up a statistical result. Local realism sets a strict mathematical limit on how strongly the results for the two particles can be correlated if they’re not connected in some strange way. In dozens of Bell tests, the limit has been broken, proving that quantum mechanics violates locality, realism, or both.
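The ‘strict mathematical limit’ can be made concrete with the CHSH (Clauser-Horne-Shimony-Holt) inequality, one common form of Bell’s inequality; which form a particular test uses is not specified here, so this choice is an assumption. The Python sketch enumerates every local deterministic strategy, in which each pair carries predetermined ±1 answers for both settings on each side, and compares the best of them with the textbook quantum prediction E(a, b) = cos 2(a − b) for polarisation-entangled photons in the state (|HH⟩ + |VV⟩)/√2.

```python
import math
from itertools import product


def chsh(E, a, a2, b, b2):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return E(a, b) - E(a, b2) + E(a2, b) + E(a2, b2)


# Local realism: each pair carries predetermined outcomes (+1 or -1) for each
# of the two settings on each side. Random mixtures of these strategies cannot
# beat the best single one, so enumerating all 16 gives the local bound.
best_local = max(
    abs(A0 * B0 - A0 * B1 + A1 * B0 + A1 * B1)
    for A0, A1, B0, B1 in product((-1, 1), repeat=4)
)


def quantum_E(a_deg, b_deg):
    """Correlation of +/-1 polariser outcomes for (|HH> + |VV>)/sqrt(2)."""
    return math.cos(2 * math.radians(a_deg - b_deg))


# Polariser angles (degrees) that maximise the quantum value.
S_quantum = chsh(quantum_E, 0, 45, 22.5, 67.5)

print(f"local-realist bound: |S| <= {best_local}")
print(f"quantum prediction:   S = {S_quantum:.3f} (2*sqrt(2) = {2 * math.sqrt(2):.3f})")
```

Any reliably measured S above 2 breaks the local-realist limit; quantum mechanics allows values up to 2√2 ≈ 2.83.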

Entangled photons are usually the particle of choice for Bell tests, and the local-realism-violating measurements are made using electronic single-photon detectors. But if humans can see single photons, an observer could replace one of these detectors, playing a direct role in a test of local realism.

Conveniently, spontaneous parametric downconversion can also be used to produce entangled photons. A different kind of experiment could use pairs of photons entangled in their polarisation. The experiment will be designed so that one photon goes to the observer when a particular polarisation measurement gives a particular outcome, while all other measurements will be made by single-photon detectors, at least in a first experiment. The observer’s job will be to note how often that outcome occurs, and that number will feed into the correlation calculation that determines whether or not local realism has been violated.

But the observer will likely notice their photon in only a small proportion of the experimental trials, so they could never measure the true frequency of that measurement outcome directly. As in a single-photon vision test, the experiment will be carefully designed to eliminate bias and help the observer be as objective as possible. A forced-choice design is the secret ingredient again. We will randomly choose whether to send the entangled photon to the left or right side of the observer’s eye, and at the same time send a second, non-entangled control photon to the opposite side with a probability equal to the highest frequency of that outcome that local realism allows. In each trial, the observer will decide whether they saw anything on the left side and on the right side – so they can respond left, right, or both. If the observer chooses the side with the entangled photon significantly more often than they choose the control side, the outcome violates local realism.
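The logic of this design can be checked with a toy Monte Carlo in Python. Every number and name here is a hypothetical assumption: the entangled photon is present with probability `p_entangled`, the control photon with probability `p_control` (standing in for the local-realist maximum), the observer notices any photon that arrives with the same low efficiency on either side, and phantom flashes occur at a fixed false-alarm rate on each side. The observer never learns how often the photons were actually present, yet because both sides share the same efficiency, the side reported more often still reveals which probability is larger.

```python
import random


def run_forced_choice(trials, p_entangled, p_control, efficiency,
                      false_alarm, seed=0):
    """Toy model of the forced-choice Bell readout (all parameters hypothetical).

    Returns how many times the observer reported a flash on the
    entangled-photon side and on the control side.
    """
    rng = random.Random(seed)
    entangled_reports = control_reports = 0
    for _ in range(trials):
        # Sides are randomised, so the observer cannot know which is which.
        entangled_on_left = rng.random() < 0.5
        # Is each photon actually delivered on this trial?
        entangled_sent = rng.random() < p_entangled
        control_sent = rng.random() < p_control
        # Same detection efficiency on both sides, plus independent phantom flashes.
        see_entangled = ((entangled_sent and rng.random() < efficiency)
                         or rng.random() < false_alarm)
        see_control = ((control_sent and rng.random() < efficiency)
                       or rng.random() < false_alarm)
        # The observer only reports sides: left, right, both or neither.
        saw_left, saw_right = ((see_entangled, see_control) if entangled_on_left
                               else (see_control, see_entangled))
        # The analysis maps the reported sides back to photon types.
        entangled_reports += saw_left if entangled_on_left else saw_right
        control_reports += saw_right if entangled_on_left else saw_left
    return entangled_reports, control_reports


ent, ctl = run_forced_choice(200_000, p_entangled=0.85, p_control=0.75,
                             efficiency=0.05, false_alarm=0.01)
print(f"entangled side: {ent}, control side: {ctl}")
```

With these made-up numbers the observer reports something on only about 5 per cent of trials per side, far too few to estimate either probability directly, but the entangled side still comes out ahead. Setting `p_entangled` equal to `p_control` removes the difference, which is why the entangled side has to win by more than statistical error to count against local realism.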

Why do these experiments? Beyond the ‘far out’ factor, there are serious scientific reasons. Exactly why and how superposition states collapse to definite outcomes is still one of the great mysteries of physics today. Testing quantum mechanics with a new, unique, ready-to-hand measurement apparatus – the human visual system – could rule out or provide evidence for certain theories. In particular, a class of theories called macrorealism proposes that there’s an undiscovered physical process that always causes the superposition states of large objects (such as eyeballs and cats) to collapse very quickly. This would mean that large superpositions are actually impossible, not just very unlikely due to disturbances from interactions with the environment. The Nobel Prize-winning physicist Anthony Leggett at the University of Illinois has been pushing for experimental tests of such theories. If superposition experiments using the human visual system showed a clear divergence from standard quantum mechanics, it could be evidence that something like macrorealism is at work.

Bell tests are also still an active area of research. In 2015, all the major Bell-test loopholes – experimental technicalities that could have allowed local realism to persist, however unlikely – were finally closed. Since then, researchers have proposed and carried out a variety of more exotic Bell tests, trying to push the limits of entanglement and nonlocality. In 2017, researchers led by David Kaiser of the Massachusetts Institute of Technology and Anton Zeilinger of the University of Vienna performed a ‘cosmic Bell test’: they used light from distant stars to trigger the measurement settings, in an attempt to show that predetermined correlations between entangled particles (which could open a loophole for local realism to persist) would have to extend hundreds of years into the past. An international collaboration called the BIG Bell Test used random choices from more than 100,000 human participants to decide the measurement settings for a Bell test in 2016. A Bell test with a human observer would be a fascinating addition to these experiments.

These days, I spend less time in blackened rooms than I once did. At Los Alamos National Laboratory in New Mexico, I work on ways to use single photons (detected by electronics, not the eye) to create space ‘licence plates’ for satellites in orbit around the Earth. But when this next generation of experiments makes quantum mechanics visible, I plan to retreat back into the dark and fire up my own single-photon detectors again.