Most people assume that torturing another human being is something only a minority are capable of doing. Waterboarding requires the use of physical restraints – perhaps only after a physical struggle – unless the captive willingly submits to the process. Slapping or hitting another person, imposing extremes of temperature, electrocuting them, requires active others who must grapple with, and perhaps subdue, the captive, imposing levels of physical contact that violate all norms of interpersonal interaction.

Torturing someone is not easy, and subjecting a fellow human being to torture is stressful for all but the most psychopathic. In None of Us Were Like This Before (2010), the journalist Joshua Phillips recounts the stories of American soldiers in Iraq who turned to prisoner abuse, torment and torture. Once the soldiers are removed from the theatre of war and the camaraderie of the battalion, intense, enduring and disabling guilt, post-traumatic stress disorder, and substance abuse follow. Suicide is not uncommon.

What would it take for an ordinary person to torture someone else – perhaps electrocute them, even to the point of (apparent) death? In possibly the most famous experiments in social psychology, the late Stanley Milgram of Yale University investigated the conditions under which ordinary people would be willing to obey instructions from an authority figure to electrocute another person. The story of these experiments has often been told, but it is worth describing them again because they continue, more than 40 years on and many successful replications later, to retain their capacity to shock the conscience and illustrate how humans will bend to the demands of authority.

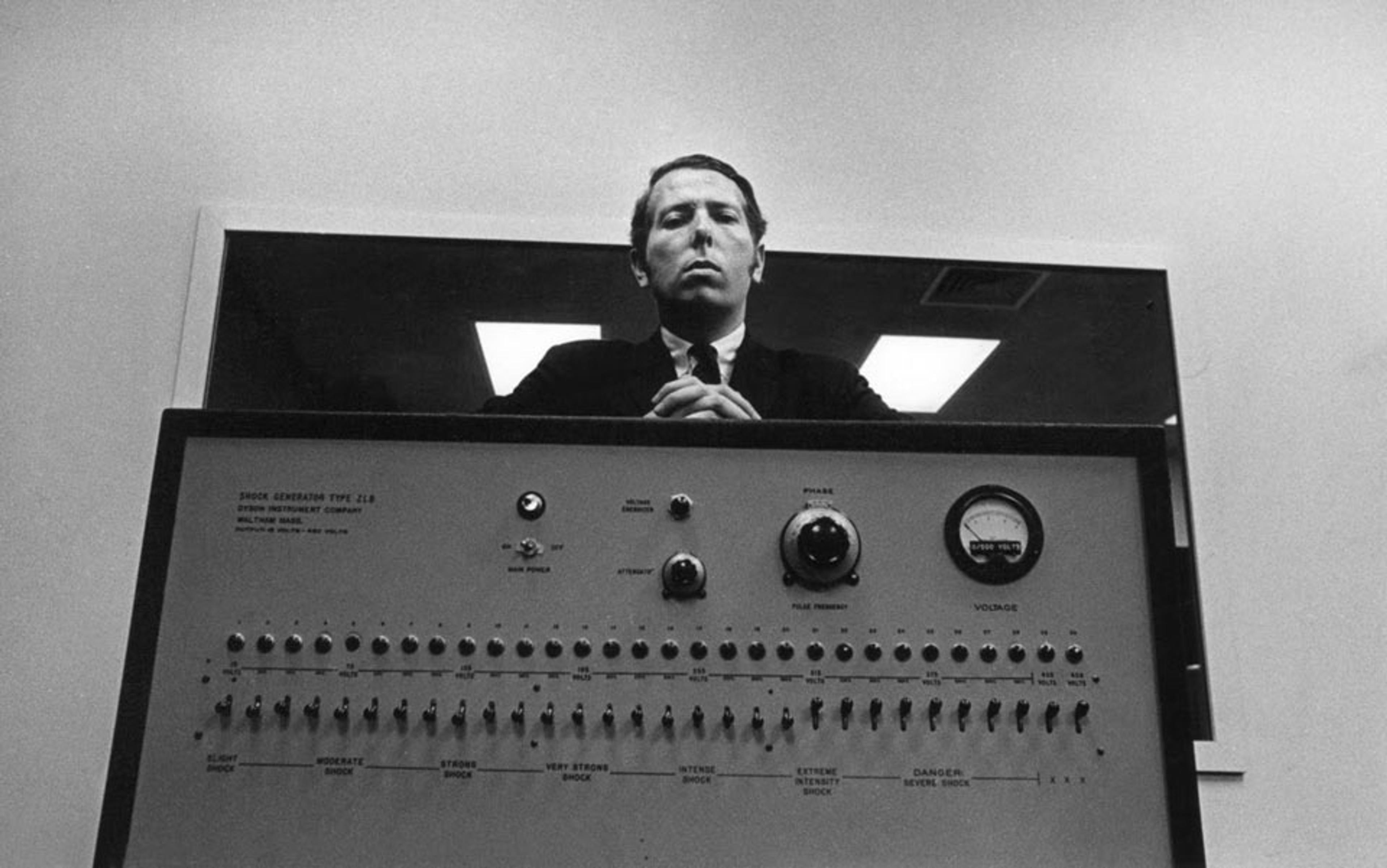

Milgram invited members of the public by advertisement to come to his laboratory to investigate the effects of punishment on learning and memory. Subjects were introduced to another participant and told this person was going to be electrocuted whenever they misremembered words they were meant to learn. This other person – in fact, an actor who did not actually experience any pain or discomfort – was brought to a room and hooked up to what looked like a set of electric shock pads. The actor was in communication via a two-way speaker with the subject, who was seated in a second room in front of a large box featuring a dial said to be capable of delivering electric shocks from 0 to 450 volts. At various points around the dial, different dangers associated with particular shock levels were indicated. The experimenter (the authority figure) was a scientist in a white coat, who gave instructions to the unwitting subject; that individual would apply the electric shock whenever the actor made an error, and the apparent distress of the actor would increase as the shock level increased.

At the start of these experiments, Milgram had his experimental protocols reviewed. It was generally concluded that the vast majority of people would not go anywhere near the highest levels of shock: that they would desist from shocking the actor long before the maximum point on the dial was reached. However, Milgram found that about two-thirds of test participants progressed all the way to the maximum shock. If the subject indicated any worries, the experimenter would use verbal statements such as: ‘The experiment requires that you continue.’ Simple verbal prompts and the presence of an authority figure in a lab context were enough to induce behaviour that, if seen in the outside world, would be regarded as evidence of extreme psychopathy and lack of empathy.

What is the lesson to be drawn from these experiments? If an authority gives the go‑ahead, humans are willing to visit seeming extremes of pain on another person for trivial reasons, namely, an apparent inability to recall words from a list.

Milgram’s results were remarkable and led to an explosion of research on the psychology of obedience. There were 18 successful replications of his original study between 1968 and 1985, and there have been several more recent replications, with a host of different variables worth examining in detail.

In 2010, for instance, the psychologists Michaël Dambrun and Elise Vatiné at Blaise Pascal University in France used no deception; participants were told that the learner was an actor feigning being shocked. Yet several results stand out: participants reported less anxiety and distress when the learner was of North African origin. And participants who exhibited higher levels of right-wing authoritarianism and who showed higher levels of anger were more likely to show high levels of obedience as well.

A further replication of Milgram’s work was undertaken in 2014 by Laurent Bègue at the University of Grenoble and colleagues, who transposed the Milgram paradigm to a television game-show setting. Here, three conditions were tested: the ‘standard Milgram’ condition using the voice of authority; a ‘social support’ condition, in which an accomplice intervenes to say that the show must be stopped because it is immoral; and a ‘host-withdrawal’ condition, in which the host departs, leaving participants to decide for themselves whether to continue. There was 81 per cent obedience in the standard condition, but only 28 per cent obedience in the host-withdrawal condition.

The team further found two personality constructs moderately associated with obedience: agreeableness and conscientiousness. These are dispositions that might indeed be necessary for willing or unwilling participation in a programme of coercive interrogation or torture. Interestingly, individuals of a more rebellious disposition (eg those who’ve been on strike) tended to administer lower-intensity shocks. Of course, rebels are not usually selected by institutions to operate sensitive programmes: Edward Snowden is the exception, not the rule.

Milgram’s work and subsequent replications are not the only studies to reveal some of the potential psychological mechanisms of the torturer. In the early 1970s, the psychologist Philip Zimbardo conducted an experiment to investigate what would happen if you took people – in this case, psychology students – randomly divided them into ‘prisoners’ and ‘prison guards’, and then housed them in a ‘prison’ in the basement of the psychology department at Stanford University. Again, remarkable effects on behaviour were observed. Those designated prison guards became, in many cases, very authoritarian, and their prisoners became passive.

The experiment, which was supposed to last two weeks, had to be terminated after six days. The prison guards became abusive in certain instances, and began using wooden batons as symbols of status. They adopted mirrored sunglasses and clothing that simulated the clothes of a prison guard. The prisoners, by contrast, were fitted in prison clothing, called by their numbers not their names, and wore ankle chains. Guards became sadistic in about one-third of cases. They harassed the prisoners, imposed protracted exercise as punishments on them, refused to allow them access to toilets, and would remove their mattresses. These prisoners were, until a few days previously, fellow students and not guilty of any criminal offence.

The scenario gave rise to what Zimbardo referred to as deindividuation, in which people might define themselves with respect to their roles, not to themselves or their ethical standards as persons. These experiments emphasise the importance of institutional context as a driver for individual behaviour, and the extent to which an institutional context can cause people to override their individual and normal predispositions.

The combined story that emerges from Milgram’s obedience experiments and Zimbardo’s prison experiments challenges naive psychological views of human nature. Such views might suggest that people have an internal moral compass and a set of moral attitudes, and that these will drive behaviour, almost irrespective of circumstance. The emerging position, however, is much more complex. Individuals might have their own moral compass, but they are capable of overriding it and inflicting severe punishments on others when an authority figure is present and institutional circumstances demand it.

Anecdotally, it’s clear that many people who have engaged in torturing others show great distress over what they have done, and some, if not many, pay a high psychological price. Why is this?

Humans are empathic beings. With certain exceptions, we are capable of simulating the internal states that other humans experience; imposing pain or stress on another human comes with a psychological cost to ourselves.

Those of us who are not psychopaths, have not been deindividuated, and are not acting on the instructions of a higher authority do, indeed, have a substantial capacity for sharing the experiences of another person – for empathy. Over the past 15 to 20 years, neuroscientists have made substantial strides in understanding the brain systems that are involved in empathy. What is the difference, for example, between experiencing pain yourself and watching pain in another human? What happens in our brains when we see another in pain or distress, especially somebody with whom we have a close relationship?

In what has to be one of the most remarkable findings in brain imaging, it has now been shown repeatedly that when we see another person in pain, we experience activations in our pain matrix that correspond to the activations that would occur if we were experiencing the same painful stimuli (without the sensory input and motor output, because we have not directly experienced an assault to the surface of the body). This core response accounts for the sudden wincing shock and stress we feel when we see someone sustain an injury.

In 2006, Philip Jackson at Laval University in Quebec and colleagues examined the mechanisms underlying how one feels one’s own pain versus how one feels about the pain of another person. The team started from the observation that pain in others often provokes prosocial behaviour such as comforting. Such behaviour occurs naturally, but in a situation of torture it would have to be actively inhibited. The researchers compared common painful situations such as a finger being caught in a door with pictures of artificial limbs being trapped in door hinges. The subjects were asked to imagine experiencing these situations from the point of view of the self, from the point of view of another person, or from the point of view of an artificial limb. They found that the pain matrix is activated both for self- and for other-oriented imagining. But certain activated brain areas also discriminated between self and others, in particular, the secondary somatosensory cortex, the anterior cingulate cortex, and the insula.

Other experiments have focused on the issue of compassion. In 2007, Miiamaaria Saarela at the Helsinki University of Technology and colleagues examined subjects’ judgments about the intensity of suffering in chronic‑pain patients who volunteered to have their pain provoked and thus intensified. They found that the activation of a given observer’s brain was dependent on their estimate of the intensity of the pain in another’s face, and also highly correlated with their own self-rated empathy.

Such studies show that people are very capable of engaging in empathy for the pain of another; that the mechanisms by which they do so revolve around brain mechanisms that are activated when one experiences pain too; but that additional brain systems are recruited to discriminate between the experience of one’s own pain and the experience of seeing another’s pain. In other words, during states of empathy, people do not experience a merging of the self with the psychological state of another. We continue to experience a boundary between self and other.

This leaves us with the cognitive space for a rational evaluation of alternatives that is not possible when one is experiencing the actual stressor. No matter how great our capacity to identify with others, there are elements missing because we are not directly experiencing the sensory and motor components of a stressor. We lack the capacity to fully feel our way into the state of another person who is being subjected to predator stress, and experiencing an extreme loss of control over his or her own bodily integrity. This space is known as the empathy gap.

The empathy gap was explored in a brilliant set of experiments by Loran Nordgren at Northwestern University in Illinois and colleagues in 2011 on what constitutes torture.

The first experiment concerns the effects of solitary confinement. The researchers induced social pain – what individuals feel when they are excluded from participating in a social activity or when their capacity to engage in social affiliation is blunted by others. They used an online ball-toss game, ostensibly with two other players but in reality entirely preprogrammed. Participants were enrolled in one of three conditions. In the no-pain condition, the ball was tossed to them on one-third of the occasions, corresponding to full engagement and full equality in the game. In the social-exclusion/social-pain condition, the ball was tossed to them only 10 per cent of the time – they were ostensibly excluded from fully participating in the game by what they believed to be the other two players, and thus would have felt the pain of social rejection. Control subjects did not play the game at all.

Then the researchers led everyone through a second study that was apparently unrelated to the first. Subjects were given a description of solitary confinement practices in US jails and asked to estimate the severity of pain that these practices induce. As predicted by the authors, the social-pain group perceived solitary confinement to be more severe than the no-pain and control groups did, and the social‑pain group was nearly twice as likely to oppose extended solitary confinement in US jails.

The second experiment used participants’ own tiredness to see whether it affected their judgments about sleep deprivation as an interrogation tactic. Participants were a group of part-time MBA students, holding down full-time employment and required to attend classes from 6pm to 9pm. A group of this type offers a great advantage. You can manipulate, within the one group, the extent of people’s fatigue by having them measure their own level at the start of the three-hour class and then again at the end of the class. As you’d expect, subjects are very tired after working a full day and then attending a demanding class in evening school. Half the students were asked to judge the severity of sleep deprivation as a tool for interrogation at the start of the class. The other half were asked to judge it at the end of the class, after their own fatigue was at a very high level. The researchers found that the fatigued group regarded sleep deprivation as a much more painful technique than the non-fatigued group did.

In a third experiment, participants placed their non-dominant arm in iced water while completing a questionnaire regarding the severity of the pain and the ethics of using cold as a form of torture. Control subjects put their arm in room-temperature water while they completed the questionnaire. A third group placed an arm in cold water for 10 minutes while completing an irrelevant task and then completed the questionnaire without having their arm in water. Actually experiencing cold had a striking impact on subjects’ judgment of the painfulness of cold and its use as a tactic for obtaining information. In short, the researchers found the empathy gap. Exposure to cold 10 minutes before answering the questions left an empathy gap too, challenging the notion that people who have experienced the pain of interrogation in the past – for example, interrogators exposed to pain during training – are in a better position than others to assess the ethics of their tactics.

In the final experiment, one group of subjects had to stand outdoors without a jacket for three minutes, at just above freezing point. A second group put a hand in warm water, and a third in ice-cold water. Each group was then required to judge a vignette about cold punishment at a private school. The researchers found that the cold-weather and iced-water groups gave higher estimates of the pain and were much less likely to support cold manipulations as a form of punishment.

These experiments all serve to highlight a central issue: proponents of coercive interrogation don’t generally have personal experience of torture. University professors who argue in favour of torture have not actually used the rack to enhance students’ ability to recall forgotten lectures. Those who talk about torture do not have the responsibility for conducting the torture itself. Judges will not leave the safe confines of their court to personally waterboard a captive. Politicians will not leave the safe confines of their legislative offices to keep a captive awake for days at a time.

The Torture Memos, created to advise the CIA and the US president on so-called enhanced interrogation techniques, include an extended discussion of waterboarding and show just how vast the empathy gap can become. The memos note that the waterboard produces the involuntary perception of drowning, and that the procedure may be repeated but is to be limited to 20 minutes in any one application. One can do all sorts of basic arithmetic to calculate how much water, at what flow rate, needs to be applied to the face of a person to induce the experience of drowning. The water might be applied from a hose; it might be applied from a jug; it might be applied from a bottle – many possibilities are available, given human ingenuity and the lack of response that might occur during these intermittent periods of the ‘misperception of drowning’, as the Torture Memos so delicately put it.

However, one point is not drawn out in the memos: that the detainee is being subjected to the sensation of drowning for 20 minutes. There is literature on the near-death experience of drowning, from which we know it happens quickly, that the person loses consciousness and then either dies or is rescued and recovered. Here, no such relief is possible. A person is subjected for 20 minutes to an extended, reflexive near-death experience, one over which they have no control and in the course of which they are expected also to engage in the guided retrieval of specific items of information from their long-term memories. Yet we subsequently read in the memos that ‘even if one were to parse the statute more finely to treat “suffering” as a distinct concept, the waterboard could not be said to inflict severe suffering’.

Here we see a profound failure of imagination and empathy: to be subjected to a reflexive near-death experience for 20 minutes in one session, knowing that multiple sessions will occur, is by any reasonable person’s standards a prolonged period of suffering. The position being adopted is entirely one of a third party focused on its own actions. In this context, waterboarding is clearly a ‘controlled acute episode’ imposed by the person who is doing the waterboarding. However, for the person on whom it is being imposed, waterboarding will not be a ‘controlled acute episode’; it will be a near-death experience in which the individual is suffocated without the possibility of blackout or death for 20 minutes. There is a deliberate confusion here of what the person who is imposing waterboarding feels with what the person being waterboarded actually feels.

Can we chart this kind of confusion in the brain? In a 2006 study, John King at University College London and colleagues used a video game in which participants either shot a humanoid alien assailant, gave aid to a human in the form of a bandage, shot the wounded human, or gave aid to the attacking alien. The game included a virtual, three-dimensional environment consisting of 120 identical square rooms. Each room contained either a human casualty or the alien assailant. The participant had to pick up the tool at the door and use it appropriately. This tool was either a bandage to give aid or a gun that could be shot at whoever was in the room. Participants rated the shooting of the human casualty as relatively disturbing but shooting the alien assailant as not disturbing. However, assisting the wounded human was seen as approximately as disturbing as shooting the alien assailant. The overall pattern of the data was surprising: the same neural circuit (amygdala: medial prefrontal cortex) was activated during context-appropriate behaviour, whether helping the wounded human or shooting the alien assailant. This suggests that, for the brain at least, there is a common origin for the expression of appropriate behaviour, depending on the context.

This finding leads to a more subtle view than we might originally have suspected: that we have a system in the brain with the specific role of understanding the behavioural context within which we find ourselves and then behaving appropriately to that context. Here, the context is simple: to give aid to a fellow human and to defend oneself against the aggressive attack of a non‑human assailant are both appropriate.

It is inevitable that a relationship will develop over time between the interrogator and the person being interrogated. The question is the extent to which this relationship is desirable or undesirable. It could potentially be prevented by using interrogators who have low empathic abilities or by constantly rotating interrogators, so that they do not build up a relationship with the person who is being interrogated. The problem here, of course, is that this strategy misses what is vital about human interaction, namely, the enduring predisposition that humans have for affiliation with each other and our capacity to engage with others as human beings and to like them as individuals. And this in turn will diminish the effectiveness of the interrogation. It will even make it easier for the person being interrogated to game the interviewer, for example, by giving lots of differing stories and answers to the questions. In turn, this makes detecting reliable information much harder. And significantly, the most empathic interrogators are also the most vulnerable to terrible psychic damage after the fact. In his book Pay Any Price (2014), The New York Times Magazine correspondent James Risen describes torturers as ‘shell-shocked, dehumanised. They are covered in shame and guilt… They are suffering moral injury’.

A natural question is why this moral and psychic injury arises in soldiers who, after all, have the job of killing others. One response might be that the training, ethos and honour code of the soldier is to kill those who might kill him. By contrast, a deliberate assault upon the defenceless (as occurs during torture) violates everything that a soldier is ordinarily called upon to do. Egregious violations of such rules and expectations give rise to expressions of disgust, perhaps in this case, principally directed at the self.

This might explain why, when torture is institutionalised, it becomes the possession of a self-regarding, self-supporting, self-perpetuating and self-selecting group, housed in secret ministries and secret police forces. Under these conditions, social supports and rewards are available to buffer the extremes of behaviour that emerge, and the acts are perpetrated away from public view. When torture happens in a democracy, there is no secret society of fellow torturers from whom to draw succour, social support, and reward. Engaging in physical and emotional assaults upon the defenceless and eliciting worthless confessions and dubious intelligence is a degrading, humiliating, and pointless experience. The units of psychological distance here can be measured down the chain of command, from the decision to torture being a ‘no-brainer’ for those at the apex to ‘losing your soul’ for those on the ground.

Adapted from Why Torture Doesn’t Work: The Neuroscience of Interrogation by Shane O’Mara. Published by Harvard University Press. Copyright © 2015 by the President and Fellows of Harvard College. Used by permission. All rights reserved.