Throughout an unusually sunny fall in 1970, hundreds of students and faculty at Syracuse University in New York state sat, one at a time, before a printing computer terminal (similar to an electric typewriter) connected to an IBM 360 mainframe located across campus. Almost none of them had ever used a computer before, let alone a computer-based information retrieval system. Their hands trembled as they touched the keyboard; several later reported that they had been afraid of breaking the entire system as they typed.

The participants were performing their first online searches, entering carefully chosen words to find relevant psychology abstracts in a brand-new database. They typed one key term or instruction per line, such as ‘Motivation’ on line 1, ‘Esteem’ on line 2 and ‘L1 and L2’ on line 3, to search for papers that included both terms. After running the query, the terminal produced a printout indicating how many documents matched each search; users could then narrow or broaden that search before generating a list of article citations. Many users burst into laughter upon seeing a response from a computer so far away.
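The line-by-line searching described above can be sketched in a few lines of Python. This is a minimal illustration, not SUPARS’s actual software: the abstracts, the `run_session` function and the handling of the ‘L1 and L2’ syntax are all assumptions made for the example. Each line yields a set of matching document numbers, and a later line can combine earlier sets by referring to their line numbers.

```python
import re

# Hypothetical stand-ins for Psychological Abstracts entries (not real data).
ABSTRACTS = {
    1: "Motivation and self esteem in adolescents",
    2: "Esteem needs and workplace performance",
    3: "Motivation of learners in remote classrooms",
}

# Matches a combining instruction such as "L1 and L2" or "L3 or L4".
COMBINE = re.compile(r"L(\d+)\s+(and|or)\s+L(\d+)", re.IGNORECASE)


def words(text):
    """Lower-case a text and return its set of word tokens."""
    return set(re.findall(r"[a-z]+", text.lower()))


def run_session(lines):
    """Evaluate search lines in order and return one result set per line.

    A line is either a single search term or an instruction combining the
    result sets of two earlier lines, referred to by their line numbers.
    """
    results = []
    for line in lines:
        match = COMBINE.fullmatch(line.strip())
        if match:
            left = results[int(match.group(1)) - 1]
            right = results[int(match.group(3)) - 1]
            if match.group(2).lower() == "and":
                hits = left & right  # documents found by both lines
            else:
                hits = left | right  # documents found by either line
        else:
            term = line.strip().lower()
            hits = {doc_id for doc_id, text in ABSTRACTS.items() if term in words(text)}
        results.append(hits)
        # Like the printout, report only how many documents matched.
        print(f"L{len(results)} {line}: {len(hits)} documents")
    return results


session = run_session(["Motivation", "Esteem", "L1 and L2"])
```

With this toy data the session reports 2, 2 and 1 matching documents, showing how a user could narrow a search one line at a time before asking for citations.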

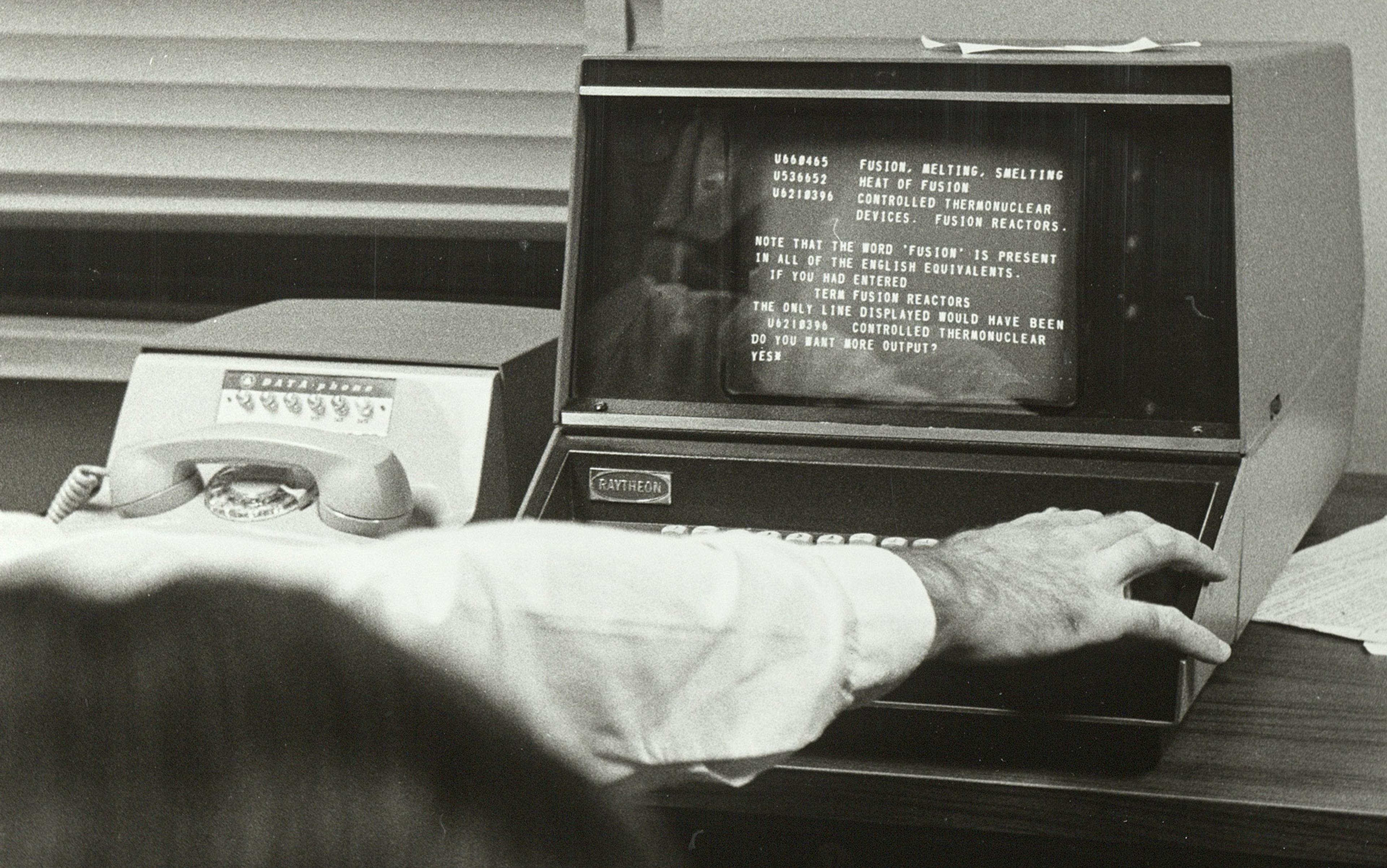

The IBM 360 mainframe computing system with printing terminal. Courtesy IBM

As part of a telephone survey afterwards, participants were asked to provide two or three words describing the experience. Of the 78 words provided, 21 were the same adjective: ‘frustrating’. Participants had trouble signing on to the system, ran into unpredictable failures and ‘irrelevant output’ and, most of all, struggled with not knowing ‘what words to use in a search’. Yet they also found the system intriguing and exciting (‘fun’, ‘thorough’, ‘I dig computers’), and 94 per cent said they would use SUPARS (the Syracuse University Psychological Abstracts Retrieval Service) again if it were available. Several offered to keep the experiment running past its deadline by asking their departments to contribute funding to the project.

This group of academic guinea pigs, mostly graduate students in education, psychology and librarianship, was part of a radical online search experiment run by the Syracuse University School of Library Science. SUPARS was one of many ambitious information-retrieval studies that took place on US university campuses between the late 1960s and mid-1970s. Several factors drove the surge in this research. Advances in processing speed and storage had allowed academic databases and catalogues to be digitised and moved online. Computer terminals were newly modular and could be placed around campuses for decentralised access to mainframes. And military and industry funding for computer-based research was more abundant than it had ever been. Academic librarians seized the chance to explore this expensive new technology. In turn, universities offered unclassified environments for collaborations with corporate technology firms and military groups; SUPARS was sponsored by the Rome Air Development Center, the laboratory arm of the US Air Force.

It’s easy to see why librarians of the 1970s set out to revolutionise search. Work across the academy was expanding to such a degree that there would soon not be enough human librarians to support all of it. Yet to get the information they needed, researchers faced a time-consuming, physically demanding process that required a librarian’s intervention. While academic researchers could browse new issues of journals in their field, a focused search of everything that had come before still meant consulting a reference librarian to look up the correct Library of Congress subject headings in a multivolume manual. Armed with a set of subject headings, the researcher would then search the library catalogue for books and citation indexes for journal articles, including subscription databases such as the Science Citation Index as well as hand-built bibliographies created by their university’s subject librarians. Finally, they would physically track down the books and bound periodicals containing articles they thought might be relevant – if the volumes happened to be on the library shelves.

It’s no wonder that SUPARS participants found the system compelling, despite its limitations. And given how familiar university librarians were with the challenges of search, it makes sense that the system they designed bypassed subject headings and citation indexes. What’s more surprising is that, of all the online search experiments that took place during this period – including commercially focused search systems like Lockheed’s Dialog, which has since become an enterprise product – SUPARS most closely resembled contemporary web search, prefiguring several core features of the search tools we rely on more than 50 years later.

SUPARS and other largely forgotten systems were the forerunners of today’s search engines. While the popular history of the internet valorises Silicon Valley coders – or, sometimes, the former US vice president Al Gore – many of the original concepts for search emerged from library scientists focused on making documents accessible across time and space. Working with research and development funding from the military and industry, these library scientists made advances that can be seen everywhere in the current online information landscape: from general approaches to ingesting and indexing full-text documents, to free-text searching and a sophisticated algorithm that drew on the saved searches of previous users, a foundational building block for contemporary query expansion and autocomplete. Indeed, these and many other approaches developed by campus pioneers are still used today by the multibillion-dollar businesses of web search and commercial library databases, from Google to WorldCat.


Pauline Atherton Cochrane (centre) with colleagues working in the Syracuse University Libraries on SUPARS. Photo courtesy Syracuse Libraries Special Collections

SUPARS was designed by a librarian named Pauline Atherton (known today as Pauline Atherton Cochrane). In 1960, aged 30 and early in her library career, she was the cross-reference editor of that year’s revision of the World Book Encyclopedia, responsible for thorough and accurate cross-linking between articles. By 1966, she was working at the Syracuse University libraries and in the library school, where in 1968 she demonstrated, in a project called AUDACIOUS, the first use of an online decimal classification file to aid search. That same year, she established LEEP, the first computer-based teaching lab to integrate online search into regular classroom teaching at the library school. (In the world before the internet, ‘online’ meant a networked, real-time connection between a mainframe computer and some other remote device, such as a terminal.)

The following year, in 1969, Atherton designed SUPARS with her co-investigator Jeffrey Katzer, another library science professor at Syracuse. The main goal of the project was to provide online searching at massive scale in order to learn as much as possible about how users searched online, how they felt about it, and what they needed to search better. To do so, the team set up a searchable corpus of scholarly content available to the entire campus: more than 35,000 recent entries from the American Psychological Association’s Psychological Abstracts. Indexed for retrieval in the SUPARS system, this was the first database of significant size available online in an unclassified environment. While nowhere near the size and scope of today’s web search, both the user group and the searchable content were enormous for the time.

Two decisions from Atherton and her team made SUPARS truly novel. First, they stripped away all subject headings from the entries in Psychological Abstracts and made all the words directly searchable, except for connectors such as ‘and’ and articles like ‘a’ or ‘the’. This made SUPARS the first system where extensive free text was available online for both searching and output. (They titled their final report ‘Free Text Retrieval Evaluation’.) Second, they saved every SUPARS search in a parallel database that could be queried alongside the abstracts themselves, making SUPARS the first experiment that allowed users to access and use previous searches to find alternative terms or approaches.
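Both design decisions can be illustrated with a toy model, under clearly stated assumptions: the stop list, the `FreeTextSystem` class and its method names are hypothetical, and nothing here reflects how SUPARS was actually programmed on the IBM 360. Every word except connectors and articles is indexed directly, and every query is saved to a parallel log that later users can search.

```python
import re
from collections import defaultdict

# Hypothetical stop list: connectors and articles left out of the index.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "in", "to"}


def index_terms(text):
    """Free-text indexing: keep every word token except stop words."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


class FreeTextSystem:
    """Toy retrieval system with a document index and a parallel search log."""

    def __init__(self, documents):
        self.index = defaultdict(set)  # term -> ids of documents containing it
        for doc_id, text in documents.items():
            for term in index_terms(text):
                self.index[term].add(doc_id)
        self.search_log = []  # every query ever run, stored as its terms

    def search(self, query):
        """Return documents containing all query terms; log the query."""
        terms = index_terms(query)
        self.search_log.append(terms)
        if not terms:
            return set()
        return set.intersection(*(self.index.get(t, set()) for t in terms))

    def past_searches_with(self, term):
        """Query the search log for earlier queries that used a term."""
        term = term.lower()
        return [q for q in self.search_log if term in q]


system = FreeTextSystem({
    1: "Motivation and self esteem in adolescents",
    2: "Esteem needs and workplace performance",
})
print(system.search("motivation and esteem"))        # {1}
print(system.search("self esteem in the workplace"))  # set()
print(system.past_searches_with("esteem"))
```

A later user who queries the log for ‘esteem’ sees which other terms earlier searchers paired with it, which is the kind of cue a database of previous searches can offer.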


Each of these features would have been novel on its own but, to understand how far ahead of its time the combination was, it’s worth looking at how web-search services operate today. Google, Bing and other search engines index web pages using two major components: crawlers discover new pages and regularly revisit pages they have already found, while parsers analyse the content of those pages and store the resulting information, including all free text, in an internal database. When a user enters a search query, the search engine tries to match the words and phrases in the query to pages in its database and serve the most relevant results.
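A drastically simplified sketch of these two components, assuming a hypothetical three-page ‘web’ held in memory: a breadth-first crawler discovers every page reachable from a seed URL, a parser stores each page’s free text as word counts, and a ranking step orders matching pages by how often the query terms occur. Real engines also recrawl pages and weigh many other signals; the URLs, functions and scoring rule are illustrative assumptions, not how Google or Bing actually rank results.

```python
import re
from collections import Counter, deque

# Hypothetical miniature web: URL -> (page text, outgoing links).
WEB = {
    "a.example": ("Bicycle repair guide for beginners", ["b.example", "c.example"]),
    "b.example": ("Bicycle touring and bicycle maintenance", ["a.example"]),
    "c.example": ("Guide to baking bread", []),
}


def crawl(seed):
    """Crawler: breadth-first discovery of every page reachable from seed."""
    seen, queue = {seed}, deque([seed])
    while queue:
        url = queue.popleft()
        for link in WEB[url][1]:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return seen


def parse(urls):
    """Parser: store each page's free text as word counts in a database."""
    return {url: Counter(re.findall(r"[a-z]+", WEB[url][0].lower())) for url in urls}


def rank(database, query):
    """Return matching pages, most query-term occurrences first."""
    terms = re.findall(r"[a-z]+", query.lower())
    scores = {url: sum(counts[t] for t in terms) for url, counts in database.items()}
    matches = [url for url, score in scores.items() if score > 0]
    return sorted(matches, key=lambda url: (-scores[url], url))


database = parse(crawl("a.example"))
print(rank(database, "bicycle"))  # ['b.example', 'a.example']
```

Here ‘bicycle’ appears twice on `b.example` and once on `a.example`, so that is the order returned; `c.example` is crawled and parsed but does not match.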

In addition to the words that searchers enter themselves, contemporary web-search algorithms also take into account other terms closely related to those in the search query, including synonyms (such as a search for ‘bike’ returning results for ‘bicycle’ and ‘cycle’) and other directly related words.

Most search engines will also include words that were part of similar queries performed by others, which become part of the internal thesauri used to add search terms to a user’s query. This process of including related words, known as query expansion, significantly improves the relevance of records returned. Similarly, Google and other search engines also suggest additional search terms to users via autocomplete, creating predictions based on previous searches to help users quickly complete their queries.
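Query expansion and autocomplete can both be sketched against a log of earlier queries. In this hypothetical example, the synonym table, the query log and the rule of adding only the single most frequent co-occurring term are assumptions chosen for illustration; production systems rely on far larger thesauri and statistical models.

```python
from collections import Counter

# Hypothetical synonym table, standing in for an internal thesaurus.
SYNONYMS = {"bike": {"bicycle", "cycle"}}

# Hypothetical log of queries entered by earlier users.
QUERY_LOG = [
    "bike repair",
    "bike repair shop",
    "bike touring",
    "bike repair shop",
    "bread recipe",
]


def expand(query, log=QUERY_LOG, synonyms=SYNONYMS, extra=1):
    """Query expansion: add synonyms, then the `extra` words most often
    used alongside the query's terms in earlier queries.

    The added words are meant to broaden or re-weight matching (OR-ed in),
    not to be required alongside the original terms.
    """
    terms = query.lower().split()
    expanded = set(terms)
    for t in terms:
        expanded |= synonyms.get(t, set())
    co_occurring = Counter(
        word
        for past in log
        if set(terms) & set(past.split())  # an earlier query sharing a term
        for word in past.split()
        if word not in expanded
    )
    expanded |= {word for word, _ in co_occurring.most_common(extra)}
    return expanded


def autocomplete(prefix, log=QUERY_LOG, limit=3):
    """Autocomplete: suggest the most frequent past queries with this prefix."""
    counts = Counter(q for q in log if q.startswith(prefix.lower()))
    return [q for q, _ in counts.most_common(limit)]


print(sorted(expand("bike")))  # ['bicycle', 'bike', 'cycle', 'repair']
print(autocomplete("bike r"))  # ['bike repair shop', 'bike repair']
```

With this log, `expand("bike")` adds ‘bicycle’ and ‘cycle’ from the synonym table plus ‘repair’, the word earlier searchers most often paired with ‘bike’, while `autocomplete("bike r")` ranks ‘bike repair shop’ first because it was entered more often.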

SUPARS thus prefigured web search by giving users the ability to search free text directly in the documents themselves, and by allowing searchers to piggyback on the search strategies of those who came before. Meanwhile, the SUPARS team gauged the usefulness of these individual searches by analysing the system’s transaction log. After an initial pilot project, two rounds of SUPARS testing were run, from October to December 1970 (SUPARS I) and from November to December 1971 (SUPARS II). Atherton’s team concluded that free-text search was an efficient way to retrieve more of the relevant documents in a collection (known as ‘recall’ in the scientists’ parlance) and might be just as effective as a search led by a human research librarian. What’s more, a vocabulary that kept evolving as the system adapted to human input and behaviour was an improvement on the fixed, ‘one-shot’ controlled vocabularies of earlier search systems. The SUPARS team had no way of knowing that AI-powered web-search algorithms would be doing this precise work a few decades later, but they clearly sensed that this would be a new and effective way of continually updating search results.

In a 1972 letter to the editor of the Journal of the American Society for Information Science, Katzer described the reasoning behind providing a database of all previous search queries:

The purpose of this Search Data Base is to aid the user as he tries to formulate queries to the data base of documents (Psychological Abstracts.) Since SUPARS is currently using an unrestricted vocabulary, the output from the search data base could help the user discover other ways to attack his topic in the document data base: It will provide keywords used by other topic experts, along with a representation of their thought processes … [W]e think that this is a beginning in an area which has not been sufficiently explored: the use of user intelligence to augment all of the effort which has gone into machine intelligence.

It is tempting to depict Atherton’s team as utopian futurists, but the SUPARS experiment was not designed with a guiding vision like the open web in mind. It was specifically created for a future in which fewer librarians would be available to help researchers in person. Extending the collective intelligence of others was a practical solution, not an idealistic one.

Atherton’s group observed that, because the new computer terminal locations at Syracuse were ‘remote from a reference librarian or any other human specialists in the user’s interest area’, users would need an additional source of help, which could be found in ‘the human intelligence of all other users of the system’. The aggregate decisions of other researchers were only a substitute for a library expert, they wrote:

Ideally, a user would be able to talk with someone familiar with his interest area and be provided with a variety of words and other cues. The user could then develop or formulate a search inquiry to the system that had the specificity or exhaustiveness needed to maximise retrieval.

As they worked with the modular terminals on campus, the SUPARS team saw the future that was coming, and what a world based on distributed, networked computing would lose: an ever-larger number of researchers would be working outside the library, on their own, in need of support that librarians wouldn’t be able to provide. Atherton’s team wasn’t predicting a world where expert librarians wouldn’t be needed; they were preparing for a world where research would take place in many disparate locations, too far from a reference desk for librarians to help.


The SUPARS experimenters also concluded that, while utilising the search terms of others was a promising alternative to subject-based search, it had real limitations. One of the final SUPARS recommendations was to continue developing controlled vocabulary, explaining that ‘a need continues to exist in interactive free-text searching for some form of user vocabulary or synonym control’. They came to this conclusion after seeing how frequently SUPARS participants stumbled into search vocabulary problems such as, in one of their examples, searching for ‘people’ instead of ‘humans’ and returning no results. The participants themselves missed the comprehensiveness of subject headings. In fact, as part of the SUPARS survey, they were asked if they preferred a free-text system or one in which the vocabulary was more controlled: 42 per cent preferred the free-text system, 36 per cent preferred the controlled vocabulary, and 12 per cent wanted both.

In this way, SUPARS is meaningful both as a design far ahead of its time and as a counterexample to established techno-utopian histories of the internet and the world wide web. The people credited as visionaries in this history almost always imagined a world where technology would unequivocally improve human communication, intelligence and effectiveness.

For example, one of the most celebrated figures in this history is Joseph Carl Robnett ‘Lick’ Licklider, whose idea of a universal network directly inspired the invention of ARPANET, often described as ‘the first internet’. (Licklider was also deeply involved with similar 1960s and ’70s campus experiments for online search; he both funded and advised on several studies at MIT libraries that ran during the same period as SUPARS.)

In 1968, the year before SUPARS was designed, Licklider’s paper ‘The Computer as a Communication Device’ declared: ‘In a few years, men will be able to communicate more effectively through a machine than face to face’, and described a rewarding, blissful society mediated by human-computer interaction. Licklider predicted that ‘life will be happier for the on-line individual’ and that ‘communication will be more effective and productive, and therefore more enjoyable’. The essay is typical of this futuristic genre about the potential of information technology: both predictive and rosy.

Culture celebrates people like Licklider for being visionary in a positive vein. But Atherton and the SUPARS research team deserve similar celebration for having foreseen, and then designed for, what the future would lose. If we expand our group of established internet visionaries to include people like Atherton, we see a more complex portrait of how different kinds of researchers envisioned the world to come. Where Licklider saw what we would gain from being able to communicate online with anyone in the world, Atherton’s group saw that we would lose expert intermediaries, and they designed for that cost.

In 2022 and 2023, as the first generative AI search engines, including academic search engines such as Elicit and Consensus, were introduced to a wide set of users amid both great excitement and scepticism, it is similarly useful to analyse what will be lost when researchers come to rely on these tools. When we can simply enter research questions to generate instantaneous literature reviews, for example, the result will not merely be a great leap forward. This new technology will also erode grounding and context, even as incredible new discoveries are made – a different loss from the one Atherton saw, but similarly intangible and deeply consequential. Anticipating these consequences, not mourning them as Luddites but actively considering how to help researchers overcome them, is a lesson we can learn from the SUPARS team.