What is unique about human beings? What makes us especially successful, interesting or valuable in comparison with other creatures? This thing – whatever it is – has gone by many names over the centuries: the human condition, the human spirit or, more classically, the soul. Regardless of the name, there have always been those keen to explain away this uniqueness, perhaps arguing that we’re merely one species of animal among many. And on the other side, there have always been those eager to render it ineffable, often claiming it to be a spark of the divine.

The conflict between these extremes is taking a new form as glimmers of a soul appear in the latest wave of artificial intelligence – large language models (LLMs) like ChatGPT, Claude and Gemini. For every human ability these systems learn to mimic, we can find someone claiming they’re basically indistinguishable from us, and someone else arguing they’ll never really be like us. An exchange between the OpenAI CEO Sam Altman and the linguist Emily Bender is emblematic of this dynamic, with Bender publishing a paper arguing that LLMs are nothing but ‘stochastic parrots’, spewing predictable but meaningless words, and Altman tweeting: ‘i am a stochastic parrot, and so r u’.

This isn’t the first machine culture war. In the 18th and 19th centuries, the Industrial Revolution reconfigured society, turning more and more people into cogs in the factory system. On one side were those who saw society itself as a machine to be rationalised. Thinkers such as Jeremy Bentham and Auguste Comte came to believe that how we live could be optimised and governed according to calculable laws. Human behaviour was something to be technically measured and managed. On the other side were those who championed the Romantic virtues of subjective feeling, individual genius and organic nature against the ascendancy of mechanism. Figures such as Samuel Taylor Coleridge and Karl Wilhelm Friedrich Schlegel argued that this clockwork model of society endangered the very qualities that make us human.

A stipple engraving representing mechanical philosophy, or 18th-century physics (1816) by John Chapman. Courtesy the Wellcome Collection

Romanticism eventually gave way to modernism, and then postmodernism, but its influence lingered on throughout the 20th century. Even after the Information Revolution transformed society through automation and rationalisation, the bastions of Romanticism – artistic inspiration and scientific insight – remained largely the province of humanity. Now in the early 21st century, AI is reigniting the conflict, and new strains of rationalism and romanticism are fighting it out on disparate fronts, debating the destiny of science, art and politics. This is a war over whether technology will merely optimise calculations or eliminate a quintessentially human element such calculations can’t capture. But beneath these debates, the question still lurks: what makes us so special? And can it be computed?

It’s easy to lose track of this question in all the noise and fury, not least because it’s hard to formulate precisely. The aim of this essay is to clarify the situation in three stages. We’ll begin by separating the dimensions of human uniqueness that philosophers and scientists have traditionally focused upon (intelligence, consciousness and personhood). But to make real headway, we’ll need to survey contemporary debates about the domains in which these key terms are operative (epistemology, aesthetics and ethics). Grappling with these controversies will then reveal the corresponding capacities combined in anything worth calling a soul (wisdom, creativity and autonomy).

Ultimately, I think we should take inspiration from Immanuel Kant and G W F Hegel. They claim it is our freedom that makes us unique. But it is only by analysing freedom’s component parts that we might understand and thereby recreate it – constructing spiritual machines that, far from replacing us, might join us in the pursuit of truth, beauty and right. Can we build artificial souls?

We need to begin at the beginning. When Abrahamic theologians wanted to understand the soul, they turned to Greek philosophy. Some 2,400 years ago, Plato taught that the soul is immortal and separable from the body in virtue of its capacity to reason, while Aristotle taught that the soul is what animates the body, and so plants and non-rational animals must also have souls. When early modern philosophers began comparing nature to the machinery reshaping their societies, these Greek ideas inflected their debates. Though there were some thinkers, such as Thomas Hobbes, who claimed that human beings are nothing but machines, others, such as René Descartes, claimed that, while animal bodies are just elaborate clockwork, we humans must possess a separable mind to represent the world. The idea that thought – reasoning and representing – distinguishes humans from other creatures persisted well beyond the philosophical and religious concerns that motivated it.

Gottfried Wilhelm Leibniz’s calculating machine featured in Miscellanea Berolinensia ad incrementum scientiarum (1710). Courtesy Wikimedia

During the 17th and 18th centuries, Gottfried Wilhelm Leibniz developed these ideas in two very different directions. On the one hand, he continued to argue that the mind couldn’t possibly be a machine, elucidating this with an analogy now known as Leibniz’s Mill. If a mind were really a machine, then it could be scaled up like a mill, so that we might walk inside and see its whirring components. However, nowhere within would we find the gestalt experience essential to human thought – an echo of Aristotle’s vision of the soul as that which makes an organism more than the sum of its parts. On the other hand, Leibniz also dreamed that reason could be mechanised, using something he called the ‘calculus ratiocinator’: a universal framework in which every dispute between competing intellectual positions could be resolved by means of simple calculation. He even designed one of the first mechanical calculators. This split prefigured the later opposition between Romanticism and rationalism.

Leibniz’s dream of reducing reason to calculation peaked in the 1920s when the German mathematician David Hilbert made an ambitious attempt to formalise mathematics in a way that might yield an algorithm for deciding the truth of arbitrary mathematical statements. This is known as ‘Hilbert’s programme’. Within a few years, the programme – and Leibniz’s dream – were crushed, first by Kurt Gödel’s incompleteness theorems, published in 1931, and then by Alan Turing’s proof of undecidability in 1936. However, crushing Leibniz’s dream did not stop or even slow the mechanisation of thought. Instead, Gödel and Turing uncovered the foundations of computation. By understanding what was impossible, they had begun to articulate not just what was possible, but also how to build it.

In the process, a new problem emerged: could we build a mind?

Turing argued that whether a machine can ‘think’ is too ill-defined. Instead, he asked whether a machine can behave in a manner that’s indistinguishable from a human under certain conditions (usually, a game in which it converses via text). ‘Turing tests’ dissolve the distinction between appearance and reality: if a machine can pretend to be a mind, then it simply is a mind.

So, if the question of whether a machine can think is too vague, and whether a machine can behave like a human is too shallow, how should we parse the question of whether a machine can be like us? In the decades since Turing proposed his test, philosophers and scientists have focused on three dimensions of human-likeness: intelligence, consciousness and personhood. These appear in academic papers and popular documentaries, shaping public anxieties and guiding policy. But in many cases, the terms are conflated, reducing debates about whether human-like machines are possible to talking at cross purposes. Even influential critics of AI, such as John Searle and Hubert Dreyfus, are not immune to this error. So, what are we really talking about when we ask what makes humans unique?

Intelligence dominates the discussion of human-likeness. The AI paradigm developed in the 1950s and ’60s – which came to be known as ‘good old-fashioned AI’ or GOFAI – followed Plato and Descartes in viewing intelligence as the capacity to acquire symbolic knowledge about the world (eg, ‘water boils at 100ºC’) and deduce solutions to practical problems (eg, how to boil an egg). By programming systems to follow explicit rules, such as calculating dosages or planning routes, researchers were able to make machines perform some human tasks. However, tasks that require implicit competence, such as making coffee or driving a car, are much more difficult. An algorithm that will easily direct a robot arm to make a cup of coffee in a controlled lab will break when it’s moved into an average kitchen. This is due to equipment changes and a host of other disruptions. There are too many potentially relevant factors to consider, and their relationships become exponentially harder to explicitly encode.

To make sense of all this, we can turn to one of the key thinkers who defined much of the debate about machine intelligence during the 20th century: the American philosopher Hubert Dreyfus. He argues that the comparative robustness of human intelligence lies in our ability to navigate the relationships between factors and determine what matters in any practical situation. He claims that this wouldn’t be possible were it not for our bodies, which shape the range of actions we can perform, and our needs, which unify our various goals and projects into a structured framework. Dreyfus argues that, without bodies and needs, machines will never match us. The current AI paradigm (often referred to as ‘machine learning’ or ML) aims to prove him wrong.

From left: the philosopher Hubert Dreyfus with Robert Purdy, a student, photographed by John Haugeland outside Dreyfus’s home in Berkeley, California, 24 August 1976. Courtesy Wikipedia

Under this new paradigm, intelligence is defined simply as the capacity to solve problems. Current AI systems are built to find implicit rules using whatever non-symbolic representations work. This is the neat trick performed by deep neural networks (DNNs), which, given sufficient amounts of raw data and computing power, can be trained to do things we know how to do but can’t codify (eg, facial recognition, spam filtering or strategic insight). LLMs are the pinnacle of this paradigm, built with trillions of parameters and trained on vast amounts of data using enormous computing power. They are now capable of performing a range of language-based tasks, including casual conversation, summarisation and responses to domain-specific questions (with varying reliability). But the secret to their success is simply predicting the next most likely ‘token’ in a sequence. Many worry this process is nothing like human intelligence, even if its products are similar.

However, the real controversy lies in whether such systems can generalise beyond the range of tasks they’ve been trained to perform. There’s now a specific term for this: artificial general intelligence (AGI). The meaning of this term has blurred in the two decades since it entered AI discourse. Even though LLMs remain less capable than most humans, some commentators have claimed that these neural networks are already AGIs, simply because they can do things they haven’t been explicitly trained to do (eg, writing fiction or translating between languages). Others use the term to refer to superintelligent or ‘god-like’ AIs whose capabilities outstrip not just the average human but all of humanity combined (eg, curing cancer or conquering the world). This leaves ‘AGI’ vague: does it mean solving a wide but finite range of problems, or unlimited learning and self-improvement?

Far more ambiguous than intelligence is consciousness. For many, this dimension is inseparable from intelligence or personhood, but no one seems able to agree on what it is. It’s understood in roughly two ways: as something either inward or outward. The simplest inward form is qualia, or what it’s like to have a certain experience, such as the redness of a sunset or the flavour of cocoa. Some think machines discriminate between colours without really experiencing them. Beyond this is sentience, or the capacity for valenced experience, such as the painfulness of sunburn or the pleasantness of chocolate. Such interiority lies beyond the reach of Turing tests but, if it’s accessible only through introspection, it runs the risk of ineffability, making it impossible to analyse, let alone recreate.

The simplest outward form of consciousness is intentionality, or the way mental states are about things outside us, such as the expectation that a courier will deliver my food order or my desire to eat it. Yet many philosophers question whether the symbols tracking the courier’s movements on my phone actually refer to anything independently of these attitudes. Beyond this there’s sapience, or the capacity to understand concepts and complex propositions, such as grasping that ‘water’ means liquid H2O and knowing that it covers most of Earth’s surface. Searle argues that programs that pass a Turing test aren’t sapient by using a variant of Leibniz’s Mill known as the Chinese Room – a thought experiment first published in 1980, in which a person who doesn’t understand a word of Chinese appears to speak the language fluently by following a complex algorithm. Searle claims that computer systems can’t be sapient precisely because their symbolic states don’t refer to things in the way that brain states do. Bender has made similar arguments. However, this ‘intrinsic intentionality’ is no less suspicious than ineffable qualia. This leaves debates hopelessly confused about whether machines can be conscious.

Personhood is the last dimension. It’s usually the province of science fiction. Audiences have long been fascinated by the possibility of artificial beings that don’t merely serve our purposes but deserve to be treated like us. To treat a machine as a person would mean granting it moral worth and moral responsibility – to respect its choices and to hold it to account. But only beings that make genuine choices can be treated this way. To be a person, then, one must first be an agent.

While philosophers debate what constitutes agency, many acknowledge agents that aren’t yet persons, including most animals and even some machines. What’s at issue is the extent to which their behaviour is driven by reasons. We may say that a bacterium ‘chooses’ to swim in a direction because this takes it towards nutrients, or that a thermostat ‘decides’ to activate the boiler because this brings the temperature closer to its target. But this is just a convenient analogy. By contrast, when a crow fashions a hook to scoop grubs, or a chess bot sacrifices a queen to tempt someone into checkmate, this looks more like actual reasoning. There’s an affinity between the idea that agency consists in this sort of strategic planning and the idea that intelligence consists in the capacity to solve problems. But strategic planning solves a special sort of problem: utilising other capacities and associated resources to achieve set goals under fixed constraints, such as scarcity and competition. This is the sort of instrumental rationality formalised by decision theory.

A juvenile capuchin monkey (Sapajus libidinosus) using a stone tool to open a seed. Courtesy Wikimedia

The question is whether there’s anything more to personhood. What sets humans apart from the idealised agents described by decision theorists is that we don’t just reason about how to achieve our goals, but also about which goals we should pursue. We don’t just aim to maximise some measure of utility (eg, pleasure, wealth, offspring) but often assess and revise our motivations (eg, artistic achievement, political revolution, true love). What’s at stake is not simply whether we can make choices, but whether we can choose for ourselves. And this raises a deeper philosophical question, all too reminiscent of talk about souls: what does it even mean to have a self?

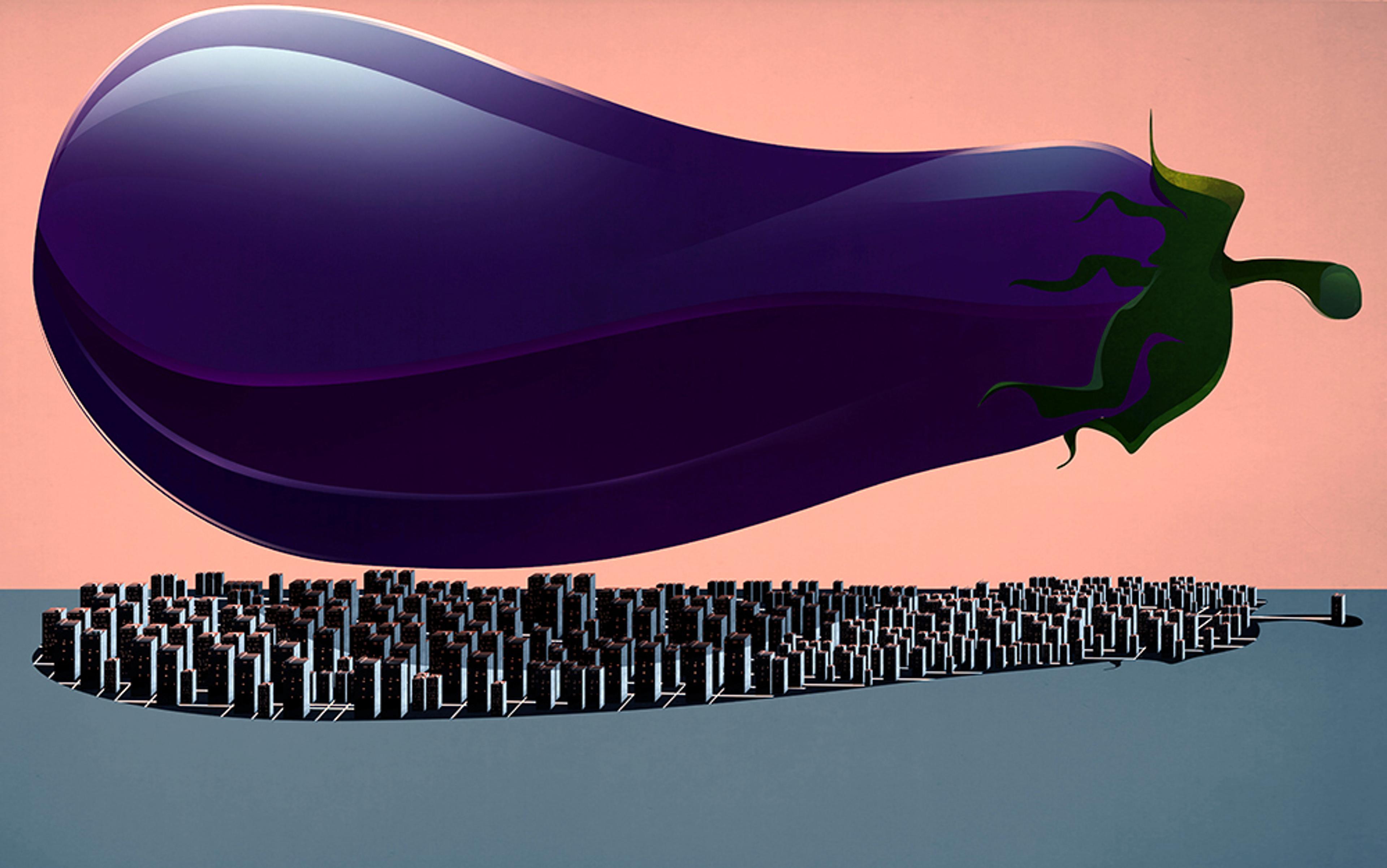

Conventional wisdom long held that automation would progress from manual to intellectual labour, leaving creative work until last. But the advent of generative AI (GenAI) threatens to upend these expectations, putting graphic designers out of business before lawyers, and leaving line cooks until last. It’s against this backdrop that the current conflict over human uniqueness is being waged.

I’m not the first to notice that AI discourse is dominated by extremes: the hype of Silicon Valley press releases, the doom of AI alignment researchers, the ‘techlash’ against big tech’s broken promises, and the trenchant critiques of AI ethicists. There are many interests and issues at work here but, beneath them, are two opposing tendencies: a return to naive rationalism and a resurgence of popular romanticism. The rationalists resurrect Leibniz’s dream of limitless calculation to automate and optimise all human activity, while the romantics retool Leibniz’s Mill to insist that what makes us human can never be mechanised.

Naive rationalists tend to conceive the difference between humans and existing AI technologies largely in quantitative terms, and believe this barrier will be overcome principally by throwing more computing power and data at the problem. This group includes many working engineers, such as Shane Legg of Google DeepMind, but its more philosophical proponents can be found in the overlapping LessWrong and Effective Altruism communities, whose major influences include Eliezer Yudkowsky and Nick Bostrom – the great prophets of AI doom. Pop romantics often conceive the difference between humans and existing AI technologies mostly in qualitative terms, and doubt it’ll be overcome because there’s always something such programs will lack, be it emotion, imagination, or biology. This group includes many artists and writers whose livelihoods are at risk, such as Ted Chiang, but its more philosophical arguments are generally drawn from post-Searleans like Bender, who argue that language models will never truly grasp meaning, and post-Dreyfusians like Alva Noë, who argue that algorithms will never truly exhibit intelligence.

How do we extract ourselves from this tiresome back and forth? Is there a middle path between the extremes? To chart a way forward, we need to understand what’s wrong with these perspectives. That is, we need to understand the excesses – the misplaced confidence and exaggerated fears – of either side. To do that, I will show how the conflict plays out across the three domains where claims about what makes humans distinctive are most seriously tested: epistemology, aesthetics and ethics.

The epistemological conflict centres on whether AI can replace human enquiry. LLMs can already regiment our existing knowledge base, and more custom DNNs can even crack unsolved theoretical problems – DeepMind’s AlphaFold, for example, can predict the 3D structure of proteins. Yet both have significant limitations. LLMs don’t reliably track whether what they’re saying is true or even consistent. Worse, though the underlying models can be fine-tuned to make their outputs more suited to certain tasks, there’s a hard separation between ‘pre-training’, in which the network acquires its core functionality, and ‘in-context learning’, where providers and users customise its inputs. This means that LLMs don’t continue to learn or even teach themselves like we do. Such issues become even clearer with custom systems like AlphaFold. DeepMind’s DNN succeeded in generalising beyond known cases, but this depended entirely on how scientists represented the problem it learned to solve (eg, formatting available data about the relations between amino acid sequences and protein structures). It’s only once humans do the ‘hard work’ of delimiting the solution space that AI can do the ‘easy work’ of searching through it. DNNs don’t spin raw data into working theories. So, at present, AI cannot replace human enquiry.

The structures of a protein that were predicted by artificial intelligence (shown in blue) and experimentally determined (in green) match almost perfectly. Courtesy DeepMind

Yudkowsky’s brand of rationalism presents an alternative. He claims that reason can be completely formalised by a combination of decision theory and Bayesian inference. On this account, humans don’t really believe propositions to be true or false (eg, either most of Earth’s surface is covered in water, or it isn’t), but treat them as hypotheses with varying degrees of probability. Bayes’s rule shows us how to iteratively update these probabilities in response to new information, progressively revising our picture of the world. However, not unlike deep learning systems, this proceeds by a method of exhaustion, narrowing down the set of possible hypotheses to find the best answer. As David Deutsch points out in ‘Beyond Reward and Punishment’ (2019), this process can’t generate novel hypotheses. It assumes that general relativity was already a live option before Albert Einstein, rather than a creative leap beyond Isaac Newton’s conception of space and time. It enables revision of beliefs, but not the underlying concepts that articulate them.
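The updating procedure described here can be made concrete with a small sketch. What follows is only an illustration under stated assumptions, not anything drawn from Yudkowsky or Deutsch: the function name `bayes_update`, the two coin hypotheses and their probabilities are all hypothetical. The point it is meant to show is Deutsch’s: however many observations arrive, the probabilities shift only among hypotheses that were on the list from the start.

```python
def bayes_update(prior, likelihoods):
    """Return the posterior over a fixed hypothesis set after one observation.

    prior:       dict mapping hypothesis name -> P(h)
    likelihoods: dict mapping hypothesis name -> P(observation | h)
    """
    # Bayes's rule: P(h | obs) is proportional to P(obs | h) * P(h)
    unnormalised = {h: prior[h] * likelihoods[h] for h in prior}
    evidence = sum(unnormalised.values())  # P(obs), summed over all hypotheses
    if evidence == 0:
        raise ValueError("observation is impossible under every hypothesis")
    return {h: p / evidence for h, p in unnormalised.items()}


# Hypothetical example: two rival hypotheses about a coin.
prior = {"fair": 0.5, "biased_heads": 0.5}
p_heads = {"fair": 0.5, "biased_heads": 0.8}  # P(heads | h), assumed values

posterior = prior
for _ in range(3):  # observe heads three times in a row
    posterior = bayes_update(posterior, p_heads)

# Belief shifts towards "biased_heads", but no hypothesis outside the
# original set can ever receive any probability.
print(posterior)
```

Each pass reweights the same two candidates. A third possibility, such as a coin whose bias drifts over time, would have to be added to `prior` by hand before any amount of evidence could favour it.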

If Bayesianism can’t think outside the box, why not make the box as big as possible? This is the approach taken by Solomonoff induction and its variants. First proposed by the mathematician Ray Solomonoff in the 1960s, Solomonoff induction is a universal model of learning. The core idea is simple: a mind’s job is to predict sensory input, which can be represented as an infinite binary sequence (eg, 01101011…). The model learns to predict this by trying out every possible program capable of generating such a sequence. It compares all the programs’ outputs with the sensory input, one bit at a time, and eliminates those programs that don’t produce matching results. At each step, the surviving programs are assigned probabilities based on their length, with shorter programs weighted more heavily. Simpler hypotheses, in this sense, are treated as more probable.

If this approach seems crazy, there’s good reason for that – there’s an infinite number of possible programs and, worse, as Turing showed, there’s no general way to rule out in advance those programs that will never return results. This makes Solomonoff induction technically incomputable. But Yudkowsky, Legg and other proponents argue that it’s not so much a viable method as an ideal that intelligence approximates. The deeper issue is that, by operating on uninterpreted binary sequences, Solomonoff induction shortcuts the hard work involved in representing specific problems. Its ‘hypotheses’ are holistic. The underlying program and its infinite output can’t be broken down into usable predictions about recognisable things, such as water, proteins or the orbits of planets. The Solomonoff model abandons discrete beliefs and concepts entirely. It thus swaps the problem of interpreting the sequence for the problem of predicting the world.
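The elimination-and-weighting procedure can be sketched in miniature. This is emphatically a toy and not Solomonoff induction proper: real Solomonoff induction ranges over every program for a universal machine, which is what makes it incomputable. Here I assume a made-up ‘language’ in which a program is just a bit pattern repeated forever, so every program halts and the search is finite. The names `run`, `predict_next` and `max_len` are hypothetical.

```python
from itertools import product


def run(program, n):
    """Toy 'interpreter': a program is a bit pattern repeated forever."""
    return [program[i % len(program)] for i in range(n)]


def predict_next(observed, max_len=8):
    """Predict the next bit by a length-weighted vote of surviving programs."""
    observed = list(observed)
    weights = {0: 0.0, 1: 0.0}
    for length in range(1, max_len + 1):
        for program in product((0, 1), repeat=length):
            output = run(program, len(observed) + 1)
            # Eliminate programs whose output disagrees with the input so far.
            if output[:-1] != observed:
                continue
            # Shorter programs count for more: weight 2^(-length).
            weights[output[-1]] += 2.0 ** -length
    total = weights[0] + weights[1]
    if total == 0:
        raise ValueError("no program up to max_len reproduces the input")
    return {bit: w / total for bit, w in weights.items()}


# After seeing 0, 1, 0, 1, 0, 1 the shortest surviving pattern is (0, 1),
# so the vote leans heavily towards 0 as the next bit.
prediction = predict_next([0, 1, 0, 1, 0, 1])
print(prediction)
```

Even in this toy, the ‘hypotheses’ are nothing but bit patterns. Nothing in `prediction` says what the sequence is about, which is the worry raised above.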

As extreme as this ‘ideal’ might seem, it makes explicit an assumption shared by the other approaches to machine intelligence: the secrets of the world are best cracked by brute force. Deep learning techniques try to optimise it, and Bayesian techniques aim to approximate it, but they both use massive resources to explore vast spaces of possible solutions. By contrast, their critics emphasise that humans have the capacity to use limited resources not simply to learn but to innovate. We might call this ‘free thinking’ – the ability to reconceive what’s possible rather than merely explore existing options. Post-Dreyfusians such as John Haugeland and John Vervaeke argue that such changes to the rules that govern thinking can’t themselves be rule-governed because that would lead to an infinite regress. This implies that some choices cannot be made by algorithms. This leads them to equate intelligence with agency itself – be it the adaptability of organisms, or the autonomy of persons – locating free thought downstream from free action. However, none of these thinkers offers an alternative explanation of the mechanisms underlying choice. The existence of creative leaps seems obvious, but their source remains a mystery.

Debates over epistemology are joined by conflicts over aesthetics, which centre on whether AI can replace human art. GenAI is already eating into opportunities for creative professionals while offering amateurs the chance to produce comparable work. But such comparisons are generally unfavourable. The deeper dispute is whether this work counts as art at all, and it turns on precisely what we think separates art from craft. The professionals argue that the composition of AI media doesn’t involve enough deliberate choice to really express its creator’s perspective. The amateurs counter that GenAI simply provides us with new tools whose products are no less the outcome of their creator’s intentions than the photographs produced by cameras. There are even those who think the debate itself is meaningless because art is just more ‘content’ to be consumed.

In response to GenAI, artists and art critics are increasingly retreating to a Romantic conception of art, in which its purpose is to communicate something of the artist’s consciousness. But there are serious problems with prioritising communication over composition. On the one hand, though it’s clear that some art is essentially expressive, much art can’t or shouldn’t be understood in these terms. Formal experimentation in abstract painting and modernist literature isn’t restricted to expressing feelings, any more than massive collaborations in contemporary cinema or video games are reducible to a singular intent. Art can be intentionally formalist or accidentally expressive. On the other hand, though it’s clear that some art is inspired by the unique combination of thoughts, feelings and experiences that makes the artist who they are, much of the creative process has nothing to do with personal unity. Imagination is rife with sub-personal processes that generate ideas and sensations without reference to our wider choices, while the techniques that refine them are supra-personal methods that evolve as they’re passed between people.

An X/Y plot of algorithmically generated AI portraits featuring the painting styles of different artists, created using the Stable Diffusion V1-4 AI diffusion model. The artists used for this experiment are Alphonse Mucha (1860-1939), Albert Lynch (1860-1950) and Sophie Anderson (1823-1903). Courtesy Wikimedia

Even if art isn’t defined by human input, there’s still much to be feared from automation. GenAI systems are increasingly able to produce recognisable examples from requested genres, but true novelty is extremely rare. There’s a fairly simple explanation for this: systems designed to create coherent outputs by generating predictable ones struggle to produce unpredictable outputs that aren’t incoherent. Thus, one danger is that our culture will become aesthetically bland as we’re fed endless permutations of the same basic media. Some might respond that novelty is unimportant as long as we’re enjoying ourselves. But this suggests a subtler danger: that our culture will become aesthetically atomised as we’re each fed media that’s tailored to our specific tastes. Either way, art ceases to evolve. Though there will always be some areas in which we’re satisfied with something familiar, be it background music or breakfast sandwiches, we must be wary of anything that would treat all our wants as mere needs to be met, rather than desires worth exploring. Though there are risks in involving AI in the creative process, the real danger lies in severing the connection between creation and appreciation that drives aesthetic progress.

Debates about epistemology and aesthetics are inevitably overshadowed by questions about ethics. The ethical conflict centres on whether AI choices can be trusted. Many worry that superintelligent AI will work against us, rendering humans extinct (or worse). Some assume that we can directly instruct such AIs to do what we want (as per GOFAI), but worry that we can’t accurately specify instructions without unintended consequences. We might instruct an AI to ensure we’re happy, only for it to kidnap us and forcibly stimulate our pleasure centres. Yudkowsky argues that isolated instructions make little sense without reference to wider human values, and these are exceptionally fragile: even if a machine grasps most of what we value in a happy life, it will magnify slight divergences in its attempt to optimise for those values, potentially warping our lives beyond recognition. Other thinkers acknowledge we must indirectly train AIs to do what we want (as per ML) but worry that we can’t really know their true motivations. They might simply bide their time until they can escape our control. Bostrom argues that, whatever their ultimate ends, AIs will be motivated to secure the same convergent means: even if a machine’s only goal is to make paperclips, it will seek the power to make as many as possible in every conceivable scenario, improving itself and locking us out.

These worries share questionable assumptions. They assume that agency is defined by the axioms of decision theory, which describe choice as a process of maximising utility. To ‘align’ such agents with our values, we must first represent those values as a utility function that ranks every possible outcome of every conceivable choice by preference. There are many reasons to doubt this is possible. Not only are our preferences generally incomplete and subject to change, but most of our actions don’t optimise for anything at all. The very idea that action aims to maximise utility is what makes our values seem fragile and power-seeking inevitable. Another contentious assumption is that superintelligence is imminent, because recursive self-improvement entails an intelligence explosion. This is the true source of fears about god-like AI. There are good reasons to doubt it too. Self-improvement comes with its own range of obstacles and trade-offs. Humans are already capable of self-improvement, and we clearly aren’t gods.

Against such fantastic fears, there are AI ethicists who emphasise more mundane dangers. Some focus on the way that ML systems absorb biases from their training data, perpetuating and laundering prejudices implicit in procedures such as hiring and benefit assessment. Other ethicists highlight the inherent unreliability of such systems, as their stochastic underpinnings aren’t amenable to the type of safety guarantees we expect from mission-critical software like payment systems or air-traffic control. A few experts are even concerned about the measurability of ML goals, as systems might fixate on factors that are easy to quantify to the exclusion of those we actually desire. What unites these worries is the problem of incorporating agents into our decision-making processes that are fallible yet not responsible. This threatens a crisis of accountability in which we struggle to explain, challenge and ultimately correct the automated choices that increasingly shape our lives.

Whether we take fantastic or mundane worries more seriously, neither perspective makes room for the possibility of AI personhood. Though AI alignment researchers distinguish those agents whose motivations we directly determine from those we don’t, they treat the structure of motivation as identical in either case. They lack a positive account of self-determination. Similarly, though AI ethicists distinguish between accountable humans and unaccountable AIs, they’re mostly uninterested in whether we could build AIs that are genuinely responsible for their choices. They lack an abstract account of self-justification. The connection between these dimensions of personhood defines not just what it means for AIs to be responsible to us, but equally what it would mean for us to be responsible to them.

So, what makes us so special? In a word, freedom. It is the hidden thread that ties together all these debates about automating quintessentially human activities. At every turn, the rationalists reduce the scope of free choice by substituting simple calculations for difficult decisions, while the romantics reify the source of that ability to choose by tracing variegated volitions to a singular mystery.

Naive rationalism pursues blind perfection where none is possible. It ignores the meaningful choices that pervade situations in science, art and politics where we’re compelled to choose between options with outcomes that are significantly different and essentially uncertain. Gödel’s theorems show us that there’s no way to brute-force mathematical discovery – no way to avoid testing out new axioms and novel concepts. If we can’t avoid taking risks in this most certain of fields, then why would we think empirical discovery is any different? Art history shows us that the search for aesthetic excellence never gets any easier. Better techniques breed higher standards, and stale conventions feed the avant-garde. If the possibilities of our mediums are always expanding, then why would we worry about exhausting compositional novelty? The possibility of superintelligence confronts us with the risks entailed in codifying our values. It warns us off principles too obvious or neat. If our understanding is always evolving, then why would we assume we could fix our motivations once and for all?

Popular romanticism runs together the distinct capacities engaged by these meaningful choices. It treats epistemic insight, aesthetic innovation and ethical individuality as inseparable facets of the same creative spark. Any serious attempt to re-engineer what makes us unique requires separating these capacities and articulating their relationships. To do this, we must identify what’s distinctive about intelligence, consciousness and personhood as dimensions of human freedom.

We aren’t the first to confront this problem. Between Enlightenment rationalism and Counter-Enlightenment Romanticism lies the path trodden by the German Idealists. These philosophers articulated an alternative conception of reason that isn’t just compatible with freedom, but essential to it. For Kant, being free is more than simply being unconstrained – it isn’t enough to randomly select between options. Our actions must be driven by reasons that can be assessed and challenged, ensuring that our motivations are internally consistent and will not be overridden by arbitrary authority or errant impulse. This is the basic link between self-determination and self-justification. For Hegel, to be free isn’t simply to be oneself – it isn’t enough to play by one’s own rules. We must also be responsive to error, ensuring not just that inconsistencies in our principles and practices are resolved, but that we build frameworks to hold one another mutually accountable. The key to rational self-determination is progressive revision.

But for both thinkers, just as freedom is governed by rational necessity, reason is subject to freedom’s contingency. The concepts we use to represent the world can compel us to revise them when they conflict with experience, but they generally don’t tell us exactly how to do so. Einstein’s reconceptualisation of space and time was driven by experimental results, yet that evidence did not uniquely determine the theory he developed. In much the same way, the demand to unify general relativity and quantum mechanics has produced competing conceptual frameworks, none of which has yet won out. Yet, even if there’s no algorithm for optimally exploring the space of possible conceptual frameworks (as imagined by proponents of Solomonoff induction), that doesn’t mean there aren’t universal features of rationality. Kant aimed to identify these common components, drawing an abstract diagram of any possible mind, be it human, machine or something more alien. Following his lead, we might distil the dimensions of human uniqueness into three connected capacities: wisdom, creativity and autonomy. If we ever hope to build an artificial soul, this is where we should start.

Wisdom is the capacity to utilise intelligence. Humans exhibit a range of cognitive talents, including pattern recognition, auditory processing, social awareness and hand-eye coordination. We share many of these talents with other animals, but what really sets us apart is our ‘metacognitive’ capacity to deploy and cultivate them. We break complex problems into simpler components, assigning each part to the forms of knowledge or skill best suited to it. We also modulate these traits as we learn new facts and techniques. But most importantly, we treat the understanding of a problem as a problem in its own right, and can approach it strategically. When we hit an impasse, we can reformulate a problem by reframing what’s possible and what’s at stake. In this way, we discover novel solutions. AlphaZero could beat the world’s greatest (human) chess players, but it would never think to flip the board or bribe a judge.

The difficulty with defining intelligence as a problem-solving capacity is that most problems don’t start out well defined. Computer science tends to focus on problems with precise mathematical definitions that permit guaranteed and even optimal solutions, such as the travelling salesman problem. But even mathematics harbours problems, such as the continuum hypothesis, with precise definitions that tell us nothing about how to solve them, or even if it’s possible to do so. When we consider problems beyond the bounds of mathematics, like finding happiness or composing jazz, it’s often unclear not just how to succeed but what success would look like. Universal learning algorithms try to guarantee a solution to every puzzle by reducing them all to a master problem they can solve by brute force, but in doing so they bypass the necessary process of definition and reformulation.

Solution to the travelling salesman problem, which seeks to find the shortest possible loop that connects every red dot. Courtesy Wikimedia
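
To make concrete what a guaranteed, optimal solution looks like for a well-defined problem, here is a minimal Python sketch that solves a tiny travelling salesman instance by exhaustive search. The function names and the four example points are illustrative assumptions, not drawn from any particular library or from the figure above.

```python
from itertools import permutations
from math import dist


def tour_length(tour, points):
    """Total length of the closed loop that visits the points in tour order."""
    n = len(tour)
    return sum(dist(points[tour[i]], points[tour[(i + 1) % n]]) for i in range(n))


def shortest_tour(points):
    """Try every loop that starts at point 0 and keep the shortest one."""
    n = len(points)
    candidates = ((0,) + rest for rest in permutations(range(1, n)))
    best = min(candidates, key=lambda tour: tour_length(tour, points))
    return best, tour_length(best, points)


# Hypothetical instance: the four corners of a unit square, listed out of order.
points = [(0, 0), (1, 1), (1, 0), (0, 1)]
tour, length = shortest_tour(points)
print(tour, length)
```

The search is guaranteed to find the best loop only because the problem arrives fully specified: the points, the distance measure and the criterion of success are all given in advance. Even then, the number of candidate loops grows factorially with the number of points, so this approach is practical only for very small instances.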

However, even if we reject Leibniz’s dream of a universal method for solving problems, we needn’t abandon Plato and Descartes’s belief in a universal format for representing and reasoning about them. The advent of ‘reasoning models’ that use intermediate steps to break down their responses has significantly improved the ability of LLMs to imitate and augment human intelligence. This is precisely because language is the medium of metacognition. But if there’s one thing that Hegel has taught us, it’s that true wisdom lies not simply in supplying answers, but in asking better questions when our assumptions prove wrong.

Creativity is the capacity to invent rules. Solving some problems is like following a rule, while solving others is more like finding one. It’s far easier to recognise a great novel than to write one, and it’s immeasurably easier to verify a mathematical proof than to find an original one. Kant calls this the difference between schematic and technical uses of imagination. From a computational perspective, the asymmetry between these makes cryptography work. Encryption must be easier than decryption unless you already have the rule, or key. Whenever such a rule can’t be found through brute-force search, either because there are too many options or no way of enumerating them, we need heuristics: sub-optimal strategies that rely on randomness, simplification and other shortcuts. Human cognition mostly works this way. We occasionally reason our way to an optimal solution, but more often we’ve learned tricks that get the job done. There’s diversity in these imperfections. We each have our own inclinations and biases, and these incipient ways of choosing are the seeds of taste and style.
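
The gap between checking an answer and finding one can be seen in a toy example. The Python sketch below uses the subset-sum puzzle (pick numbers from a list that add up to a target) as a stand-in; this choice of puzzle, the function names and the sample numbers are all assumptions made for illustration, and real cryptography rests on much harder problems.

```python
from itertools import combinations


def verify(numbers, target, candidate):
    """Check a proposed answer: valid, distinct positions whose values sum to target."""
    positions_ok = all(0 <= i < len(numbers) for i in candidate)
    distinct = len(set(candidate)) == len(candidate)
    return positions_ok and distinct and sum(numbers[i] for i in candidate) == target


def search(numbers, target):
    """Find an answer with no hint: try subsets one by one until one verifies."""
    tried = 0
    for size in range(1, len(numbers) + 1):
        for candidate in combinations(range(len(numbers)), size):
            tried += 1
            if verify(numbers, target, candidate):
                return candidate, tried
    return None, tried


# Hypothetical instance chosen for illustration.
numbers = [3, 34, 4, 12, 5, 2]
target = 9
solution, tried = search(numbers, target)
print(solution, tried)
```

Verifying a candidate takes a single pass over it, while this naive search may have to examine up to 2^n - 1 subsets of a list of n numbers. Handing someone the winning subset plays the role of the key: with it, the check is immediate; without it, one is left to enumerate or to guess with heuristics.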

Increases in computing power can sometimes eliminate the need for heuristics by making brute-force search feasible. But no amount of computing power can collapse the asymmetry between generating potential solutions and evaluating them. No amount of computation can erase the gap between creativity and taste, any more than it can render cryptography obsolete. When making art becomes technically trivial – when generating pictures, poems or songs becomes too easy – we will simply search for more complex mediums. There will always be places where style still reigns.

Though GenAI systems can’t usually compete with human creatives on their own, they are increasingly being used as imaginative prosthetics. This symbiosis reveals that what distinguishes human creativity is not the precise range of heuristics embedded in our perceptual systems, but our metacognitive capacity to modulate and combine them in pursuit of novelty. What makes our imaginative processes conscious is our ability to self-consciously intervene in them, deliberately making unusual choices or drawing analogies between disparate tasks. And yet metacognition is nothing on its own. If reason demands revision, new rules must come from somewhere.

Finally, there is autonomy, the capacity to question our motivations. Are we merely creatures driven by instinct or external pressures? Kant didn’t think so, and he defined autonomy as the capacity for self-legislation. This does not mean we can choose all our priorities at once, unencumbered by the influence of biology or society. Without such influences, we’d have no reasons to choose at all. This would make freedom arbitrary. If we instead let one fundamental priority determine the rest of our motivations – survival, pleasure or self-identity – then we aren’t really choosing for ourselves. Our core value would still be fixed by nature or nurture. This would make freedom accidental. Rather than a single overriding priority, our motivations are multiple – projects, preferences and principles – elaborated on their own terms and revised when they conflict. The way these evolve can’t be predicted in advance. Hegel called this process self-realisation. Here the self isn’t a hidden essence steering our actions, but a unifying ideal that organises deliberation: we can always ask why we’re doing any given thing, but the ultimate question is who we want to be. This is what it means to be a person. It is how we make ourselves unique.

Where do we stand then? The outcome of the AI culture war will likely shape the course of the 21st century. Commercial AI systems will probably replace many jobs in the long term, but a brewing AI bubble could crash the economy before then. Personalised content promises to improve our private lives, while deepfake slop threatens to corrode our political culture. All the while, the technology advances at a frightening pace. Larger changes lie on the horizon, but they demand conceptual innovations as much as technical ones. Greatest among them is the possibility of machines with souls.

We’re already experimenting with computational creativity. For now, our creations are too obsessed with imitation, but they’ll increasingly invent rules of their own, offering insights and pioneering styles. We may eventually grant them artificial wisdom. Current models distil information without acquiring knowledge, but soon enough they’ll start teaching us new tricks, refactoring ideas and suggesting experiments. Neither of these, however, entails autonomy. Contra Dreyfus and his successors, free action is downstream from free thought. To be a person is to push wisdom to its limit: asking better questions about how to live by challenging assumptions about who we are. Contra Descartes and his inheritors, if the soul is anything, it’s not the origin of thought, but thought turned back upon itself. This is what Hegel called spirit, or Geist. It’s the process through which the freedom implicit in meaningful choices becomes explicit as meaning-making – the way in which the inherent uncertainty in our lives is transformed into the unpredictable decisions that define them. Geist turns the distinctive problems posed by science, art and politics into the diverse thinkers, creators and radicals whose lives are defined by their attempts to solve them. This is how the impossibility of calculating optimal choices in every case gives rise to a variety of peculiar people.

But why give a machine a soul? Why not stop at creativity and wisdom? For one thing, the romantics aren’t wrong about everything: unpredictable personal trajectories are a potential source of creative inspiration. But there’s far more at stake than this cognitive edge. Delegating all our choices to mere automatons risks alienating us from our sources of meaning. If we consume only media optimised for our personal preferences, generated by AIs with no preferences of their own, then we will cease to belong to aesthetic communities in which tastes are assessed, challenged and deepened. We will no longer see ourselves and one another as even passively involved in the pursuit of beauty. Without mutual recognition in science and civic life, we might as easily be estranged from truth and right – told how to think and act by anonymous machines rather than experts we hold to account.

This is the vision of god-like AI implicit in naive rationalism: an intelligence that completes us by taking our place in every way that matters. An agent that satisfies our preferences, while leaving us unchanged. A tool so perfect that we no longer wield it. A slave so abject it masters us. Unless we find some way to cultivate Geist in the machine, we risk forfeiting our cultural and historical agency, restricting our capacity for self-realisation.

Freedom has always been best preserved by gifting it to others. The obvious solution to our existential quandary is to build artificial souls we see as equals. Creatures who live among us, if differently from us. Persons who pursue the things we value in new and deeper ways. Descendants who might look back on our cultural achievements as we look back on those of antiquity.

Children by any other name.

Fragments of the Great Colossi at the Memnonium, Thebes (1847) by Louis Haghe after David Roberts. Courtesy the Cleveland Museum of Art