Twenty years ago, a pair of psychologists hooked up a shoe to a computer. They were trying to teach it to tap in time with a national anthem. However, the job was proving much tougher than anticipated. Just moving to beat-dominated music, they found, required a grasp of tonal organisation and musical structure that seemed beyond the reach of an ordinary person without special training. But how could that be? Any partygoer can fake a smile, reach for a cheese cube and tap her heel to an unfamiliar song without so much as a thought. Yet when the guy she’s been chatting with tells her that he’s a musician, she might reply: ‘Music? I don’t know anything about that.’

Maybe you’ve heard a variation on this theme: ‘I can’t carry a tune to save my life.’ Or: ‘I don’t have a musical bone in my body.’ Most of us end up making music publicly just a few times a year, when it’s someone’s birthday and the cake comes out. Privately, it’s a different story – we belt out tunes in the shower and create elaborate rhythm tracks on our steering wheel. But when we think about musical expertise, we tend to imagine professionals who specialise in performance, people we’d pay to hear. As for the rest of us, our bumbling, private efforts – rather than illustrating that we share an irresistible impulse to make music – seem only to demonstrate that we lack some essential musical capacity.

But the more psychologists investigate musicality, the more it seems that nearly all of us are musical experts, in quite a startling sense. The difference between a virtuoso performer and an ordinary music fan is much smaller than the gulf between that fan and someone with no musical knowledge at all. What’s more, a lot of the most interesting and substantial elements of musicality are things that we (nearly) all share. We aren’t talking about instinctive, inborn universals here. Our musical knowledge is learned, the product of long experience; maybe not years spent over an instrument, but a lifetime spent absorbing music from the open window of every passing car.

So why don’t we realise how much we know? And what does that hidden mass of knowledge tell us about the nature of music itself? The answers to these questions are just starting to fall into place.

The first is relatively simple. Much of our knowledge about music is implicit: it only emerges in behaviours that seem effortless, like clapping along to a beat or experiencing chills at the entry of a certain chord. And while we might not give a thought to the hidden cognitions that made these feats possible, psychologists and neuroscientists have begun to peek under the hood to discover just how much expertise these basic skills rely on. What they are discovering is that musicality emerges in ways that parallel the development of language. In particular, the capacity to respond to music and the ability to learn language rest upon an amazing piece of statistical machinery, one that keeps whirring away in the background of our minds, hidden from view.

Consider the situation of infants learning to segment the speech stream – that is, learning to break up the continuous babble around them into individual words. You can’t ask babies if they know where one word stops and a new one begins, but you can see this knowledge emerge in their responses to the world around them. They might, for example, start to shake their heads when you ask if they’d like squash.

To investigate how this kind of verbal knowledge takes shape, in 1996 the psychologists Jenny Saffran, Richard Aslin and Elissa Newport, then all at the University of Rochester in New York, came up with an ingenious experiment. They played infants strings of nonsense syllables – sound-sequences such as bidakupado. This stream of syllables was organised according to strict rules: da followed bi 100 per cent of the time, for example, but pa followed ku only a third of the time. These low-probability transitions were the only boundaries between ‘words’. There were no pauses or other distinguishing features to demarcate the units of sound.

It has long been observed that eight-month-old infants attend reliably longer to stimuli that are new to them. The researchers ran a test that took advantage of this peculiar fact. After the babies had been exposed to this pseudolanguage for an extended period of time, the psychologists measured how long babies spent turning their heads toward three-syllable units drawn from the stream. The babies tended to listen only briefly to ‘words’ (units within which the probability of each syllabic transition had been 100 per cent) but to stare curiously in the direction of the ‘non-words’ (that is, units which included low-probability transitions). And since absolutely the only thing distinguishing words from non-words within this onslaught of gibberish was the transition probabilities from syllable to syllable, the infants’ reactions revealed that they had absorbed the statistical properties of the language.
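The logic of that experiment can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' procedure: the four three-syllable ‘words’, the stream length, the random seed and the 0.9 cut-off are all chosen here for the example, and the function names are hypothetical. The sketch builds a pause-free stream, estimates how often each syllable follows each other syllable, and then cuts the stream wherever that probability dips.

```python
import random
from collections import Counter

# Illustrative three-syllable "words" (an assumption for this sketch).
# Every syllable belongs to exactly one word.
WORDS = [("bi", "da", "ku"), ("pa", "do", "ti"),
         ("go", "la", "bu"), ("tu", "pi", "ro")]


def make_stream(n_words, seed=0):
    """Concatenate words at random, never repeating a word back to back."""
    rng = random.Random(seed)
    stream, previous = [], None
    for _ in range(n_words):
        word = rng.choice([w for w in WORDS if w != previous])
        stream.extend(word)
        previous = word
    return stream


def transition_probabilities(stream):
    """Estimate P(next syllable | current syllable) from adjacent-pair counts."""
    pair_counts = Counter(zip(stream, stream[1:]))
    first_counts = Counter(stream[:-1])
    return {(a, b): n / first_counts[a] for (a, b), n in pair_counts.items()}


def segment(stream, probs, threshold=0.9):
    """Cut the stream wherever the transition probability falls below threshold."""
    units, current = [], [stream[0]]
    for a, b in zip(stream, stream[1:]):
        if probs[(a, b)] < threshold:
            units.append("".join(current))
            current = []
        current.append(b)
    units.append("".join(current))
    return units


stream = make_stream(3000)
probs = transition_probabilities(stream)
recovered = set(segment(stream, probs))
```

With four words and no immediate repeats, each word is followed by one of three others, so a boundary transition such as ku to pa hovers around a third while within-word transitions such as bi to da stay at 100 per cent. Those numbers alone are enough for `segment` to recover the vocabulary.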

This ability to track statistics about our environment without knowing we’re doing so turns out to be a general feature of human cognition. It is called statistical learning, and it is thought to underlie our earliest ability to understand what combinations of syllables count as words in the complex linguistic environment that surrounds us during infancy. What’s more, something similar seems to happen with music.

In 1999, the same authors, working with their colleague Elizabeth Johnson, demonstrated that infants and adults alike track the statistical properties of tone sequences. In other words, you don’t have to play the guitar or study music theory to build up a nuanced sense of which notes tend to follow which other notes in a particular repertoire: simply being exposed to music is enough. And just as a baby cannot describe her verbal learning process, only revealing her achievement by frowning at the word squash, the adult who has used statistical learning to make sense of music will reveal her knowledge expressively, clenching her teeth when a particularly fraught chord arises and relaxing when it resolves. She has acquired a deep, unconscious understanding of how chords relate to one another.

It’s easy to test out the basics of this acquired knowledge on your friends. Play someone a simple major scale, Do-Re-Mi-Fa-Sol-La-Ti, but withhold the final Do and watch even the most avowed musical ignoramus start to squirm or even finish the scale for you. Living in a culture where most music is built on this scale is enough to develop what seems less like the knowledge and more like the feeling that this Ti must resolve to a Do.

Psychologists such as Emmanuel Bigand of the University of Burgundy in France and Carol Lynne Krumhansl of Cornell University in New York have used more formal methods to demonstrate implicit knowledge of tonal structure. In experiments that asked people to rate how well individual tones fitted with an established context, people without any training demonstrated a robust feel for pitch that seemed to indicate a complex understanding of tonal theory. That might surprise most music majors at US universities, who often don’t learn to analyse and describe the tonal system until they get there, and struggle with it then. Yet what’s difficult is not understanding the tonal system itself – it’s making this knowledge explicit. We all know the basics of how pitches relate to each other in Western tonal systems; we simply don’t know that we know.

Studies in my lab at the University of Arkansas have shown that people without any special training can even hear a pause in music as either tense or relaxed, short or long, depending on the position of the preceding sounds within the governing tonality. In other words, our implicit understanding of tonal properties can infuse even moments of silence with musical power. And it’s worth emphasising that these seemingly natural responses arise after years of exposure to tonal music.

When people grow up in places where music is constructed out of different scales, they acquire similarly natural responses to quite different musical elements. Research I’ve done with Patrick Wong of Northwestern University in Illinois has demonstrated that people raised in households where they listen to music using different tonal systems (both Indian classical and Western classical music, for example) acquire a convincing kind of bi-musicality, without having played a note on a sitar or a violin. So strong is our proclivity for making sense of sound that mere listening is enough to build a deeply internalised mastery of the basic materials of whatever music surrounds us.

Other, subtler musical accomplishments also seem to be widespread in the population. By definition, hearing tonally means hearing pitches in reference to a central governing pitch, the tonic. Your fellow partygoers might start a round of Happy Birthday on one pitch this weekend and another pitch the next, and the reason both renditions sound like the same song is that each pitch is heard most saliently not in terms of its particular frequency, but in terms of how it relates to the pitches around it. As long as the pattern is the same, it doesn’t matter if the individual notes are different. This capacity to hear these patterns is called relative pitch.
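One way to make the idea concrete is to write a melody as numbers and compare two renditions. The sketch below assumes pitches are encoded as MIDI note numbers (one unit per semitone), an encoding chosen here purely for illustration; the function names are hypothetical. It takes the first six notes of Happy Birthday, shifts them up three semitones, and checks that the pattern of steps between neighbouring notes is unchanged.

```python
def intervals(pitches):
    """Return the semitone steps between neighbouring notes."""
    return [b - a for a, b in zip(pitches, pitches[1:])]


def transpose(pitches, semitones):
    """Shift every note by the same number of semitones."""
    return [p + semitones for p in pitches]


# First six notes of Happy Birthday starting on G (sol sol la sol do ti),
# written as MIDI note numbers; the encoding is an assumption of this sketch.
opening = [67, 67, 69, 67, 72, 71]
higher = transpose(opening, 3)

same_pattern = intervals(opening) == intervals(higher)
```

No individual note in `higher` matches its counterpart in `opening`, yet `same_pattern` is true, which is the sense in which both renditions count as the same song.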

Relative pitch is a commonplace skill, one that develops naturally on exposure to the ordinary musical environment. People tend to invest more prestige in absolute pitch, because it’s rare. Shared by approximately 1 in 10,000 people, absolute pitch is the ability to recognise not a note’s relations to its neighbours, but its approximate acoustic frequency. People with absolute or ‘perfect’ pitch can tell you that your vacuum cleaner buzzes on an F# or your doorbell starts ringing on a B. This can seem prodigious. And yet it turns out not to be so far from what the rest of us can do normally.

A number of studies have shown that many of the other 9,999 people retain some vestige of absolute pitch. The psychologists Andrea Halpern of Bucknell University in Pennsylvania and Daniel Levitin of McGill University in Quebec both independently demonstrated that people without special training tend to start familiar songs on or very near the correct note. When people start humming Hotel California, for example, they do it at pretty much the same pitch as the Eagles. Similarly, E Glenn Schellenberg and Sandra Trehub, psychologists at the University of Toronto, have shown that people without special training can distinguish the original versions of familiar TV theme songs from versions that have been transposed to start on a different pitch. ‘The Siiiiiimp-sons’ just doesn’t sound right any other way.

It looks, then, like pitch processing among ordinary people shares many qualities with those gifted 1-in-10,000. Furthermore, from a certain angle, relational hearing might be more crucial to our experience of music. Much of the expressive power of tonal music (a category that encompasses most of the music we hear) comes out of the phenomenological qualities – tension, relaxation, and so on – that pitches seem to possess when we hear them relationally.

Musical sensitivity depends on the ability to abstract away the surface characteristics of a pitch and hear it relationally, so that a B seems relaxed in one context but tense in another. So, perhaps the ‘only-prodigies-need-apply’ reputation of absolute pitch is undeserved at the same time that its more common relative is undervalued. Aspects of the former turn out to be shared by most everyday listeners, and relative pitch seems more critical to how we make sense of the expressive aspects of music.

Another vastly undervalued skill is just tapping along to a tune. When in 1994 Peter Desain of Radboud University in the Netherlands and Henkjan Honing of the University of Amsterdam hooked up a shoe to a computer, they found what many studies since have demonstrated: that to get a computer to find the beat in even something as plodding and steady as most national anthems you have to teach it some pretty sophisticated music theory.

For example, it has to recognise when phrases start and stop, and which count as repetitions of others, and it has to understand which pitches are more and less stable in the prevailing tonal context. Beats, which seem so real and evident when we’re tapping them out on the steering wheel or stomping them out on the dance floor, are just not physically present in any straightforward way in the acoustic signal.

So what is a beat, really? We experience each one as a specially accented moment separated from its neighbours by equal time intervals. Yet the musical surface is full of short notes and long notes, low notes and high notes, performed with the subtle variations in microtiming that are a hallmark of expressive performance. Out of this acoustic whirlwind, we generate a consistent and regular temporal structure that is powerful enough to make us want to move. Once we’ve grasped the pattern, we’re reluctant to let it go, even when accents in the music shift. It’s this tenacity that makes the special force of syncopation – off-beat accents – possible. If we simply shifted our perception of the beat to conform with the syncopation, it would just sound like a new set of downbeats rather than a tense and punchy contrary musical moment, but our minds stubbornly continue to impose a structure with which the new accents misalign.

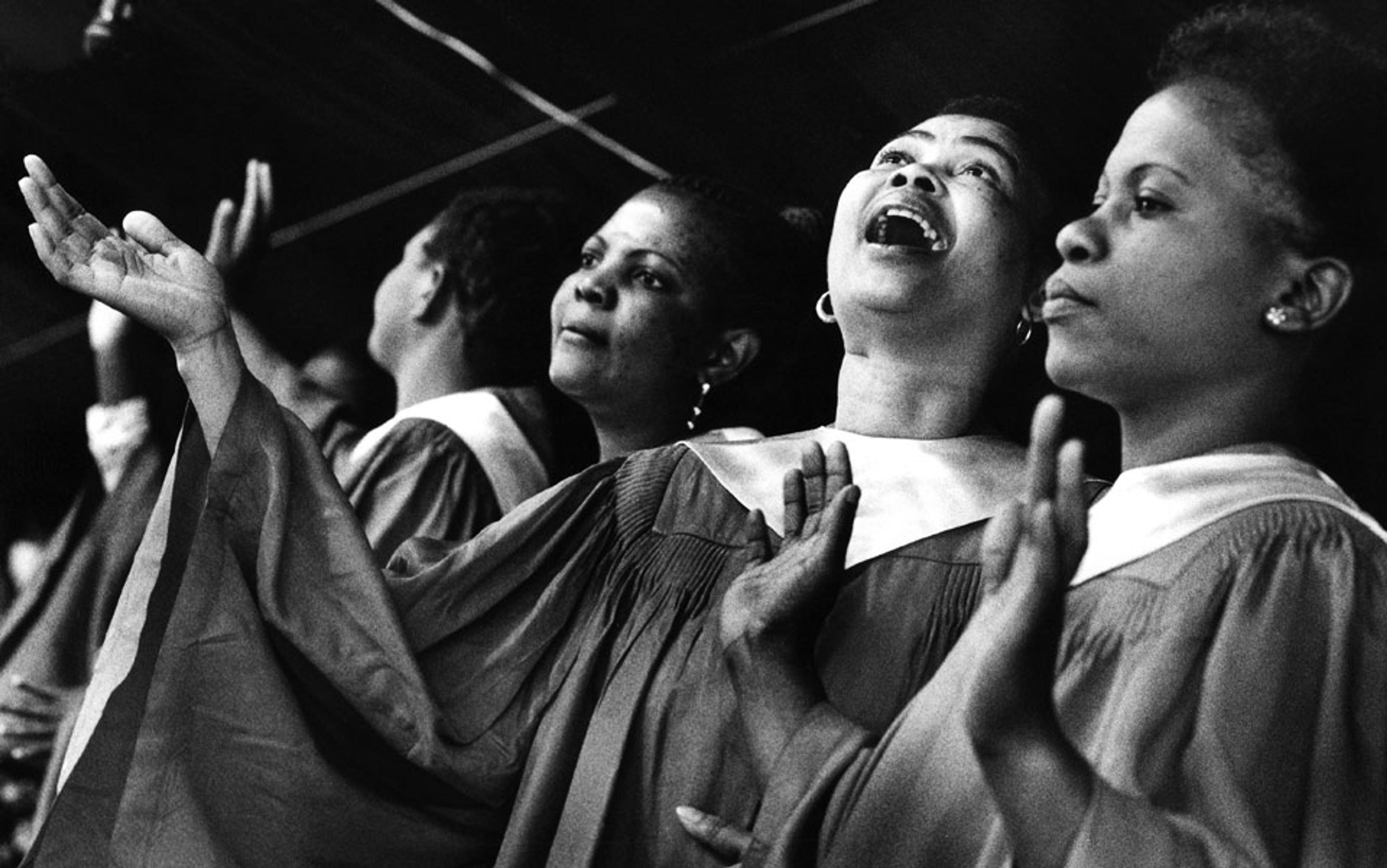

In other words, perception of rhythm depends on a more general ability to synchronise with our surroundings. But this raises the question of why we might have such an ability in the first place. It seems telling that it emerges very early in parent-infant interactions. People tend to speak in specific ways to their babies: slowly, with exaggerated pitch contours, extra repetition, and regular timing (‘so big… so big… sooooooo big’). All of these are more typical of music than of ordinary speech. And it seems that these modifications help infants to engage predictively with the speech stream, anticipating what’s going to happen next, and ultimately to insert their coo or eyebrow lift at precisely the right moment to generate a sense of shared temporal orientation between parent and child, an experience that contributes to the powerful bond between them.

Music piggybacks on this ability, using it to choreograph experiences of shared temporal attention, sometimes among large groups of people. Experiences at concerts, dance clubs and religious services where lots of people move together to a beat often create a powerful sense of bonding. When music allows us to perceive time in a shared way, we sense our commonality with others more strongly.

And so the capacity to track a beat, which might seem trivial at first glance, in fact serves a larger and more significant social capacity: our ability to attend jointly in time with other people. When we feel in sync with partners, as reflected in dancing, grooving, smooth conversational turn-taking and movements aligned to the same temporal grid, we report these interactions as more satisfying and the relationships as more significant. The origins of the ability to experience communion and connection through music might lie in our very earliest social experiences, and not involve a guitar or fiddle at all.

It has often been observed that there is a special connection between music and memory. This is what allows a song such as Tom Lehrer’s The Elements (1959) to teach children the periodic table better than many chemistry courses. You don’t need to have any special training to benefit from the memory boost conferred by setting a text to music – it just works, because it’s taking advantage of your own hidden musical abilities and inclinations. Music can also absorb elements of autobiographical memory – that’s why you burst into tears in the grocery store when you hear the song that was playing when you broke up with your boyfriend. Music soaks up all kinds of memories, without us being aware of what’s happening.

What’s less well-known is that the relationship goes both ways: memory also indexes music with astonishing effectiveness. We can flip through a radio dial or playlist at high speed, almost immediately recognising whether we like what’s playing or not. In 2010, the musicologist Robert Gjerdingen of Northwestern University in Illinois showed that snippets under 400 milliseconds – literally the blink of an eye – can be sufficient for people to identify a song’s genre (whether it’s rap, country or jazz), and last year Krumhansl showed that snippets of similar length can be sufficient for people to identify an exact song (whether it’s Public Enemy’s Fight the Power or Billy Ray Cyrus’s Achy Breaky Heart). That isn’t long enough for distinctive aspects of a melody or theme to emerge; people seem to be relying on a robust and detailed representation of particular textures and timbral configurations – elements we might be very surprised to learn we’d filed away. And yet we can retrieve them almost instantly.

That fact becomes both more and less amazing when you consider just how steeped in music we all are. Add up all the exposure in elevators and cafés and cars and televisions and kitchen radios, and the average person listens to several hours of music every day. Even when it isn’t playing, music continues in our minds – more than 90 per cent of us report being gripped by a stubborn earworm at least once a week. People list their musical tastes on dating websites, using them as a proxy for their values and social affiliations. They travel amazing distances to hear their favourite band. The majority of listeners have experienced chills in response to music: actual physical symptoms. And if you add some soaring strings to an otherwise ordinary scene in a film, it might bring even the hardiest of us to tears.

So, the next time you’re tempted to claim you don’t know anything about music, pause to consider the substantial expertise you’ve acquired simply through a lifetime of exposure. Think about the many ways this knowledge manifests itself: in your ability to pick out a playlist, or get pumped up by a favourite gym song, or clap along at a performance. Just as you can hold your own in a conversation even if you don’t know how to diagram a sentence, you have an implicit understanding of music even if you don’t know a submediant from a subdominant.

In fact, for all its remarkable power, music is in good company here. Many of our most fundamental behaviours and modes of understanding are governed by similarly implicit processes. We don’t know how we come to like certain people more than others; we don’t know how we develop a sense of the goals that define our lives; we don’t know why we fall in love; yet in the very act of making these choices we reveal the effects of a host of subterranean mental processes. The fact that these responses seem so natural and normal actually speaks to their strength and universality.

When we acknowledge how, just by living and listening, we have all acquired deep musical knowledge, we must also recognise that music is not the special purview of professionals. Rather, music professionals owe their existence to the fact that we, too, are musical. Without that profound shared understanding, music would have no power to move us.